Interface the Music

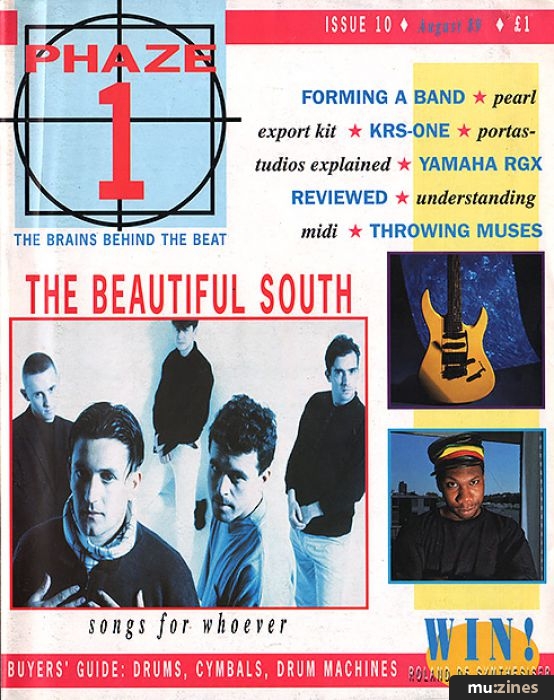

Article from Phaze 1, August 1989

Explaining what MIDI can do for you

Over the last few years, MIDI has developed into one of the most important tools for the creative musician. Simon Trask goes to France by monorail as a means of explaining why.

WHETHER YOU PLAY keyboards, guitar, drums, percussion, a wind instrument or a brass instrument - even if you don't play an instrument at all but work (or want to work) in a recording studio - some knowledge of MIDI is not just an asset, it's a must in today's hi-tech musical world. Originally intended as a means of interfacing two synthesisers, in the 6½ years since its introduction the Musical Instrument Digital Interface has spread to encompass just about every aspect of playing and recording music.

MIDI is an interfacing standard which has been agreed among all the hi-tech instrument manufacturers. This means that one manufacturer's instruments can recognise and correctly interpret MIDI data sent by another manufacturer's instruments - to put it another way, they speak the same language. Responsibility for developing the MIDI specification and for ensuring that it remains a true universally-agreed standard falls to two co-ordinating bodies: the American-based MIDI Manufacturers Association (MMA) and the Japanese MIDI Standards Committee (JMSC).

Put simply, MIDI is a means of transmitting performance information from one instrument to another. Taking keyboard instruments as an example, you play music by depressing and releasing keys on a keyboard, causing musical notes to sound; the resulting combination of these notes over time produces a piece of music (or even a Pet Shop Boys record!). Whereas an audio lead is used to transmit the actual music as an analogue signal, MIDI digitally transmits numeric codes which represent the physical actions involved in playing the music. When another MIDI instrument receives these codes via a MIDI lead it will respond to the notes as if they had been played on its own keyboard. The end result is that you are effectively 'layering' the sounds of two instruments from notes played on one of them; by linking up more than two keyboards you can layer more than two sounds, a feat which would clearly be impossible if you had to physically play every instrument at the same time.

To connect two MIDI instruments, run a MIDI five-pin DIN lead from one instrument's MIDI Out socket to the other's MIDI In socket (MIDI leads, which only use pins 2, 4 and 5, are preferable to standard five-pin DIN leads). It doesn't matter which end of the lead is plugged into which socket. Chaining several MIDI instruments together is accomplished by connecting the MIDI Thru socket of each slave instrument to the MIDI In of the next slave instrument. This is because MIDI Thru retransmits data received at an instrument's MIDI In socket, while MIDI Out transmits data generated from an instrument's keyboard.

Today's electronic musical instruments are essentially computers which have been programmed for a specific purpose. In addition to producing its own sounds, an instrument fitted with MIDI has been programmed to transmit and/or respond to MIDI data as applicable. But how is this data transferred via MIDI? Well, imagine a monorail travelling along a tunnel from one station to another. That's the MIDI link. This monorail can only travel in one direction; another monorail travelling down another tunnel is needed for travel in the reverse direction. Now imagine that each train consists of from one to three coaches, with each coach seating up to eight passengers in single file. Trains pass along the monorail at a constant speed as and when they become filled with passengers, leaving on time (unlike another transport system I could mention).

Conceptually this is how MIDI data transfer works, but what is the data that's being transferred? Look at it like this: there are a number of different combinations of passengers and empty seats within each coach, and these combinations can be used to represent numeric values. For instance, if there were only two seats then there would be four possible combinations: no passengers, two passengers, one passenger in the first seat, or one passenger in the second seat. Each extra seat doubles the number of possible combinations, so that with eight seats there are 256 possible combinations. In computer terms this method of counting is known as binary arithmetic, each 'seat' is known as a bit, eight 'seats' are known as a byte, and the two groups of four 'seats' are known as upper and lower nybbles (I kid you not). It just so happens that each bit in computer memory is capable of representing one of two possible states (effectively, on or off).
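The seat-counting argument above can be checked with a few lines of Python. This is only an illustrative sketch: the function names are made up for the purpose, and a 1 stands for an occupied seat while a 0 stands for an empty one.

```python
from itertools import product


def seat_combinations(seats):
    """Every pattern of empty (0) and occupied (1) seats in one coach."""
    return list(product((0, 1), repeat=seats))


def split_nybbles(byte):
    """Split an eight-bit value into its upper and lower four-bit nybbles."""
    return (byte >> 4) & 0x0F, byte & 0x0F


print(seat_combinations(2))         # the four patterns for two seats
print(len(seat_combinations(8)))    # 256 patterns for a full eight-seat coach
print(split_nybbles(0b10010011))    # upper nybble 9, lower nybble 3
```

Each extra seat doubles the length of the list, which is all that binary counting amounts to.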

MIDI has two types of byte: status and data. A status byte represents the action that is to be carried out, such as Note On or Note Off, while data bytes carry associated values, such as the number of the note and its associated velocity value (see below). But how are the two types of byte distinguished? Well, a status byte is indicated by having its most significant bit set (in our monorail example, the eighth passenger seat is occupied), while, logically enough, a data byte is indicated by having its most significant bit not set (this leaves seven bits for the value, so a data byte can take any of 128 values, from 0 to 127). When you play a note on the keyboard of a synth, a microprocessor which is constantly scanning the keyboard picks up what the note is (eg. Middle C is 60) and, if the keyboard is dynamic, with what velocity (strength) the note was hit. The instrument will then not only play the note using its own sound-generating capability but transmit it via MIDI. This takes the form of a 'three-coach train', with the first coach conveying the status byte for Note On, the second the data byte for the note number, and the third the data byte for the velocity value. When the note is released on the keyboard, another three-coach train will be sent down the MIDI monorail, this time with the status byte in the first coach indicating Note Off.
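As a rough sketch of the 'three-coach train', here is how the bytes for a Note On and a Note Off might be assembled. The hexadecimal status values 0x90 and 0x80 are taken from the MIDI 1.0 specification rather than from the text above, the function names are invented for illustration, and the Note Off velocity of 64 is simply a placeholder.

```python
NOTE_ON = 0x90    # Note On status value (most significant bit set)
NOTE_OFF = 0x80   # Note Off status value (most significant bit set)


def is_status(byte):
    """A status byte has its most significant bit set; a data byte does not."""
    return bool(byte & 0x80)


def three_coach_train(status, note, velocity):
    """Assemble one status byte followed by two data bytes."""
    for value in (note, velocity):
        if not 0 <= value <= 127:
            raise ValueError("data bytes must lie between 0 and 127")
    return bytes([status, note, velocity])


def note_on(note, velocity):
    return three_coach_train(NOTE_ON, note, velocity)


def note_off(note, velocity=64):
    return three_coach_train(NOTE_OFF, note, velocity)


press = note_on(60, 120)      # Middle C, struck fairly hard
release = note_off(60)
print([is_status(b) for b in press])   # only the first coach carries a status byte
```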

Therefore when a MIDI instrument receives a Note On code followed by a note number 60 and velocity number 120 it knows that it must play middle C with a velocity of 120. Similarly, when it receives a Note Off code followed by the same note number, it knows that it must stop playing that note. Velocity is an optional feature, as not all keyboards are capable of generating it and not all instruments are capable of responding to it. However, a standard number of data bytes must always be associated with each status byte (an agreed default velocity value is substituted where a keyboard can't generate velocity values itself). When an instrument can't respond to velocity, it simply ignores the velocity information. This principle applies to all types of MIDI data (yes, there are more types than just Note On and Note Off); if a MIDI instrument hasn't been programmed to recognise something, it will ignore it. There's no obligation on manufacturers to implement every aspect of the MIDI specification on every one of their instruments; additionally, many MIDI instruments allow you to specify which MIDI data they will and won't respond to and/or generate. Using the language analogy, you don't need to know the entire French language in order to talk to a French person, but obviously the more you know the more fully you can converse.
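The 'ignore what you don't understand' principle is easy to sketch from the receiving end. The hypothetical instrument below recognises only Note On and Note Off, can't respond to velocity, and quietly drops everything else. The status values come from the MIDI 1.0 specification; the function and variable names are assumptions made for the example. (The spec also allows a Note On with a velocity of 0 to stand in for a Note Off, which this sketch leaves out for brevity.)

```python
NOTE_OFF, NOTE_ON = 0x80, 0x90   # upper nybble of the status byte


def respond(message, sounding):
    """Update the set of currently sounding notes for one received message."""
    if len(message) != 3:
        return                      # not a three-byte message: ignore it
    status, note, _velocity = message   # velocity arrives but is never used
    kind = status & 0xF0            # look only at the upper nybble
    if kind == NOTE_ON:
        sounding.add(note)
    elif kind == NOTE_OFF:
        sounding.discard(note)
    # any other status byte isn't recognised here, so nothing happens


sounding = set()
respond(bytes([0x90, 60, 120]), sounding)   # Middle C starts
respond(bytes([0xE0, 0, 64]), sounding)     # unrecognised message, ignored
respond(bytes([0x80, 60, 64]), sounding)    # Middle C stops
print(sounding)
```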

Taking this analogy a bit further, in very best 1992 spirit let's say you're travelling to France to meet someone, but neither of you knows what the other looks like. As you emerge from Customs at the airport as part of a steady stream of people, how will your host be able to identify you? The answer is simple: you wear a nametag to identify yourself. Much the same principle is adopted in MIDI as a means of routing MIDI information to specific instruments in a chain, in that MIDI data is 'tagged' with what is called a 'channel' number (included as part of a status byte). Typically, each instrument can be set to send and receive on specific MIDI channels (1-16), so that an instrument receiving on channel eight, for instance, will ignore MIDI data tagged with a different channel number. Depending on which one of four permissible MIDI Modes a synth is set to, it can respond on all MIDI channels polyphonically (Mode one, known as Omni on/Poly mode), on all MIDI channels monophonically (Mode two: Omni on/Mono), on a single channel polyphonically (Mode three: Omni off/Poly) or on multiple consecutive MIDI channels monophonically per channel (Mode four: Omni off/Mono). You will no doubt at some stage come across something known as Multi Mode, in which a MIDI instrument will respond on multiple MIDI channels polyphonically. Nowadays any multitimbral MIDI instrument (ie. one which is able to play multiple sounds at the same time) will be able to respond in this mode, either allocating its voices dynamically across the MIDI channels or assigning a fixed number of voices to each channel. However, after consideration by the aforementioned official MIDI bodies it was decided not to make this an official MIDI Mode; instead, Multi Mode is officially regarded as multiple Mode Threes. Phew!
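To make the 'nametag' idea concrete, the sketch below reads the channel tag out of a status byte and decides whether a receiver should take any notice. It relies on a detail from the MIDI 1.0 specification that isn't spelled out above: the tag lives in the lower nybble of the status byte as a value from 0 to 15, which is shown to the user as channels 1 to 16. The function names and the `omni` flag are illustrative assumptions.

```python
def channel_of(status):
    """Return the channel (1-16) tagged onto a Channel status byte."""
    if not 0x80 <= status <= 0xEF:
        raise ValueError("not a Channel status byte")
    return (status & 0x0F) + 1      # lower nybble 0-15 is shown as 1-16


def accepts(status, receive_channel, omni=False):
    """With Omni on every channel is accepted; with Omni off only our own."""
    return omni or channel_of(status) == receive_channel


note_on_ch8 = 0x90 | (8 - 1)        # a Note On status byte tagged for channel eight
print(channel_of(note_on_ch8))                       # 8
print(accepts(note_on_ch8, receive_channel=8))       # True
print(accepts(0x90, receive_channel=8))              # False: tagged for channel one
print(accepts(0x90, receive_channel=8, omni=True))   # True: Omni ignores the tag
```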

By now you should understand the concept of MIDI status bytes indicating physical performance actions. The most obvious actions are Note On and Note Off, which were discussed earlier. However, there are also status bytes for conveying such information as keyboard aftertouch, pitchbend, controllers (the most common of which are modulation wheel, volume pedal and sustain pedal) and patch (ie. sound) changes. Whereas these are known as Channel commands, MIDI also has a series of System commands which, as you might guess, are not tied down to any particular MIDI channel. Best-known is probably System Exclusive, which is distinct from all the other MIDI commands in that it allows each manufacturer to transfer information specific to their own instruments. Typically, SysEx is used to transfer patch data between two units of the same model, or between an instrument and computer-based editor/librarian packages (often an invaluable asset). Each manufacturer is allocated their own official ID number, which should appear at the head of a SysEx communication so that other manufacturers' instruments know that they must ignore the data that follows.
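Here is a minimal sketch of how an instrument might use the ID at the head of a SysEx message to decide whether the data is meant for it. The framing bytes 0xF0 (start) and 0xF7 (end) come from the MIDI 1.0 specification; the example assumes a single-byte manufacturer ID, uses 0x41 and 0x43 (the IDs allocated to Roland and Yamaha) purely for illustration, and carries a made-up payload.

```python
SYSEX_START = 0xF0
SYSEX_END = 0xF7


def sysex_payload(message, my_id):
    """Return the data meant for us, or None if the message should be ignored."""
    if len(message) < 3:
        return None
    if message[0] != SYSEX_START or message[-1] != SYSEX_END:
        return None                 # not a complete SysEx message
    if message[1] != my_id:
        return None                 # another manufacturer's data: ignore it
    return bytes(message[2:-1])


dump = bytes([SYSEX_START, 0x41, 0x10, 0x20, 0x30, SYSEX_END])   # made-up payload
print(sysex_payload(dump, my_id=0x41))   # the three payload bytes
print(sysex_payload(dump, my_id=0x43))   # None
```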

Another System aspect of MIDI is that of synchronisation. Just as human musicians must listen to one another in order to stay in time, so sequencers and drum machines must be able to do the electronic equivalent of listening. In this case one device must act as the tempo source while others are slaved from it. MIDI comes to the rescue by providing not only a means of synchronisation but also a means of conveying tempo changes. As part of the agreed MIDI standard, 24 'timing bytes' are transmitted at equal intervals during the course of a quarter note, referenced to the tempo of the controlling device; the slaved device (eg. a drum machine) will lock its own tempo to the rate at which the clock bytes are received. Obviously whenever the tempo changes on the master device, the rate at which the timing bytes are sent will alter accordingly, and consequently so will the tempo of the slaved device.
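The arithmetic behind this is straightforward. With 24 timing bytes per quarter note, the gap between them depends only on the tempo, and a slaved device can work the tempo back out from the gap. The function names below are invented for the sketch.

```python
CLOCKS_PER_QUARTER_NOTE = 24


def clock_interval(bpm):
    """Seconds between successive timing bytes at a given tempo."""
    return 60.0 / (bpm * CLOCKS_PER_QUARTER_NOTE)


def tempo_from_interval(seconds):
    """What a slaved device can infer from the spacing of received timing bytes."""
    return 60.0 / (seconds * CLOCKS_PER_QUARTER_NOTE)


gap = clock_interval(120)
print(round(gap * 1000, 2))              # about 20.83 milliseconds at 120bpm
print(round(tempo_from_interval(gap)))   # the slave recovers 120
```

If the master speeds up, the gap shrinks and the slave's inferred tempo rises with it.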

MIDI can also send a code which means Start, another which means Stop, and another which means Continue. Associated with the latter is a code known as a Song Position Pointer, which carries with it a value indicating the current position in a sequence, again to a standardised resolution. When a sequencer or drum machine that can respond to the Song Position Pointer code (don't presume that all can) receives a position value, it adjusts its position accordingly; a subsequently-received Continue code then starts it playing from that position. All these codes are known as System information because they don't have channel tags - they're intended for all devices which can respond to them.
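The following sketch shows how a position value might be packed into a Song Position Pointer and followed by Continue. Several details are drawn from the MIDI 1.0 specification rather than from the text above: the status values 0xF2 (Song Position Pointer) and 0xFB (Continue), the 14-bit position split across two data bytes with the low seven bits first, and the unit of one sixteenth note (six timing bytes). The function names are assumptions.

```python
SONG_POSITION = 0xF2
CONTINUE = 0xFB


def song_position(sixteenths):
    """Pack a position, counted in sixteenth notes, into a three-byte message."""
    if not 0 <= sixteenths < (1 << 14):
        raise ValueError("position must fit in 14 bits")
    return bytes([SONG_POSITION, sixteenths & 0x7F, sixteenths >> 7])


def read_song_position(message):
    """Recover the position from a received Song Position Pointer message."""
    status, low, high = message
    if status != SONG_POSITION:
        raise ValueError("not a Song Position Pointer")
    return (high << 7) | low


def cue(sixteenths):
    """Locate to a position, then start playing from it."""
    return song_position(sixteenths) + bytes([CONTINUE])


# Start of bar nine in 4/4: eight full bars of sixteen sixteenth notes each.
print(cue(8 * 16).hex(" "))    # f2 00 01 fb
```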

SO NOW WE have an interfacing system which can transmit, down a single MIDI lead, a complete musical performance together with commands necessary for synchronising sequencers and drum machines. Not only this, but you can combine MIDI instruments from different manufacturers in the knowledge that they will work together.

Which is just as it should be, you might think, but it wasn't always like this. Back in the seventies, when synths were analogue and monophonic, a much simpler interfacing system prevailed. Known as CV/Gate, it required two cables to convey the necessary information, one for pitch and one for duration. The pitch was conveyed as a voltage level (hence CV, or Control Voltage) to the slave instrument's VCO (Voltage Controlled Oscillator) while the duration was conveyed as an on/off Gate signal to the slave instrument's VCA (Voltage Controlled Amplifier), turning the continuous oscillator output signal on or off. Clearly only one note could be active at a time with this system, but because synths were monophonic anyway, this didn't seem like too much of a problem. More problematic was the fact that manufacturers couldn't agree on a single interfacing standard for the CV and Gate signals, so it couldn't be taken as read that a synth from one manufacturer would work with a synth from another.

Similarly, a system existed for synchronising drum machines and sequencers which used the pulses-per-quarter-note method, but manufacturers couldn't agree on how many pulses per quarter note. Thus Roland used 24 while Korg used 48 and Oberheim used 96; hooking up Korg and Oberheim drum machines would cause the former to run twice as fast as the latter.
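The mismatch is a matter of simple division, as this sketch shows. The pulse counts are those quoted above; the function name and the dictionary are just for illustration.

```python
PULSES_PER_QUARTER_NOTE = {"Roland": 24, "Korg": 48, "Oberheim": 96}


def slave_speed(master, slave):
    """How fast the slave runs relative to the master's intended tempo."""
    return PULSES_PER_QUARTER_NOTE[master] / PULSES_PER_QUARTER_NOTE[slave]


print(slave_speed("Oberheim", "Korg"))   # 2.0: the Korg races ahead at double speed
print(slave_speed("Korg", "Oberheim"))   # 0.5: the Oberheim drags at half speed
print(slave_speed("Roland", "Roland"))   # 1.0: matching machines stay in step
```

Whichever machine acts as master, the Korg ends up running twice as fast as the Oberheim.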

The reason why all these inconsistencies existed was that each manufacturer wanted musicians to stick with their products and theirs alone; the perception that the industry as a whole could benefit from a standardised interfacing system didn't exist.

Of course musicians didn't want to stick with just one manufacturer's instruments, and consequently enterprising companies like Garfield Electronics and JL Cooper developed sophisticated 'black boxes' to overcome the interfacing problems, but they were hardly an ideal solution to what was a significant problem for the industry. In the late seventies the advent of the polyphonic synthesiser and of digital microprocessor control of analogue synths, together with the manufacturers' realisation that the lack of a universal interfacing standard was harming rather than helping their industry, laid the ground for MIDI.

In fact the first step in the development of what was to become MIDI can be traced back to 1980 and the now-defunct American synth manufacturer Sequential Circuits Inc. The company developed a digital interface system specifically for hooking up the keyboard of their Prophet 10 synth with its onboard sequencer, and released the spec for general discussion. Subsequent talks held between SCI, Oberheim and Roland revealed a shared interest in the interfacing problem, and at the Fall 1981 Audio Engineering Society convention, SCI President Dave Smith presented a paper which outlined a Universal Synthesiser Interface. This paper stated that the USI was "designed to enable interconnecting synthesisers, sequencers and home computers with an industrywide standard interface". The USI spec was intended as a preliminary specification to stimulate discussion within the industry, which is exactly what it did. A meeting between a number of American and Japanese manufacturers in January 1982 at the American NAMM convention added some further developments to the spec. Following this meeting, some of the Japanese companies presented their own specification, which offered a more sophisticated data structure than the USI. Smith and fellow SCI employee Chet Wood then integrated the USI and Japanese specs, and after several exchanges between them and Roland, who also served as liaison with Yamaha, Korg and Kawai, the initial MIDI specification was arrived at. The development of MIDI was first made public by Bob Moog in his October 1982 column in Keyboard magazine, while in December 1982 Sequential released the Prophet 600 synthesiser, the first commercially-available instrument to include MIDI.

While the MMA and the JMSC are responsible for all updates to the MIDI 1.0 spec, the official body for public dissemination of information on MIDI is the Los Angeles-based International MIDI Association, which anyone can join as an Individual member for $55 per year (there are also Manufacturer and Institutional categories of membership). Individual membership includes subscription to the monthly IMA Bulletin, access to the IMA Hotline (if you don't mind ringing America, that is!), access to System Exclusive codes and a sign-up discount for the PAN computer bulletin board.

Updates to the MIDI 1.0 spec in the years following its introduction have mainly been concerned with increasing the variety and flexibility of the MIDI Controllers part of the spec. However, there have also been more substantial additions which have been developed without altering the structure of the 1.0 spec in any way. There's now a MIDI Sample Dump Standard for transmitting sample data via MIDI; MIDI Time Code, which provides a means of transmitting SMPTE timecode and associated cueing information in a MIDI format; and MIDI Files, which provides a generic format for saving MIDI sequences to disk (though not, as yet, via MIDI) so that they can be transferred between different programs on the same computer.

Right, that's enough of the historical and theoretical stuff. Next month we'll look at the many and varied applications of MIDI in the real world of music and beyond.

Publisher: Phaze 1 - Phaze 1 Publishing

Feature by Simon Trask
