Conference Blues

ICMC Report

Article from Music Technology, February 1987

Fresh from his exploits at Steim Studios, Ron Briefel takes a look at what last year's International Computer Music Conference had to offer in the way of new instruments, new music, and new ideas.

Last year's International Computer Music Conference showcased some striking concerts and the usual crop of new technological developments. But are academic composers devoting too much time to the process, and not enough to the result?

AFTER A PEACEFUL and none too demanding couple of days at the Steim symposium (see report in last month's MT), I braced myself for what I knew would be an intensive and relentless onslaught of heavy-duty music research and even heavier-duty music at the International Computer Music Conference in The Hague.

Faced with an awesome schedule of double and triple paralleled sessions, I would surely get weighed down just trying to decide which ones to go to. After all, I knew I daren't miss anything worthwhile - I had to write a review of this whole thing!

So, armed with Walkman, thick notepad and camera, I took my place in the conference arena.

One of the first sessions at the Conference was devoted to notation and the graphical representation of music by computer. Some impressive traditional notation software was outlined by Dan Timis of IRCAM, and also by Donald Byrd.

But most interest centred around new interactive graphical notation and representation software. Peter Desain, for example, gave a paper outlining an ingenious graphics-based configuration and editing facility for driving a digital signal/event processor hardware device.

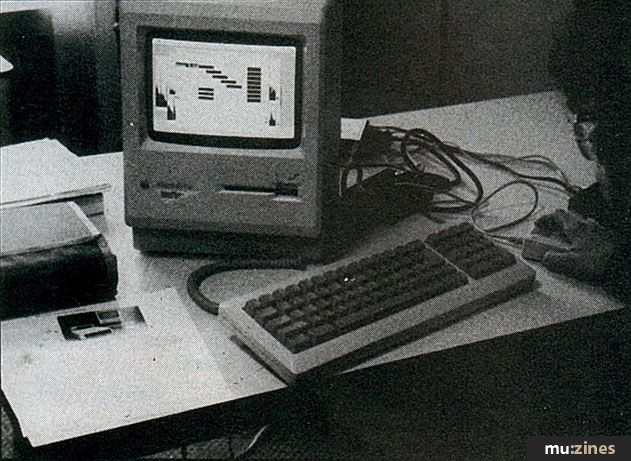

Most impressive though was MacMix, a software package for the Macintosh written by Adrian Freed for graphically representing, editing, mixing and processing digital audio and sampled data sound files. It can be used with a wide variety of hardware, so long as the correct communications protocol is set up. The software sends out storage and retrieval messages such as Record, Play and Edit commands and, of course, mix and amplitude control information. Slices of sounds are stacked vertically on the screen as layered sound blocks, each of which has its own amplitude envelope, gain setting and "in" and "out" times.

All these parameters are graphically represented and can be varied by using the mouse. Sound blocks can also be shifted back and forth in time simply by dragging the mouse sideways. The blocks themselves can represent anything from a microsound to a long period of continuous sound.
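To make the sound-block idea concrete, here is a minimal sketch of how blocks with their own gain, amplitude envelope and "in" time might be summed into a single mix. It is not MacMix's actual code or data format: the names `SoundBlock`, `envelope_at`, `mix` and `shift` are hypothetical, samples are plain lists of floats, and the envelope is assumed to be a set of linear breakpoints.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class SoundBlock:
    """One slice of sound on the screen (hypothetical model, not MacMix's own)."""
    samples: List[float]   # raw audio samples for this block
    start: int             # "in" time, as a sample index within the mix
    gain: float = 1.0      # overall gain setting for the block
    # Envelope breakpoints: (position 0..1 within the block, amplitude)
    envelope: Tuple[Tuple[float, float], ...] = ((0.0, 1.0), (1.0, 1.0))


def envelope_at(env, pos):
    """Linearly interpolate the breakpoint envelope at position pos (0..1)."""
    for (p0, a0), (p1, a1) in zip(env, env[1:]):
        if p0 <= pos <= p1:
            if p1 == p0:
                return a1
            return a0 + (a1 - a0) * (pos - p0) / (p1 - p0)
    return env[-1][1]


def mix(blocks, length):
    """Sum all blocks into one output buffer of the given length."""
    out = [0.0] * length
    for block in blocks:
        n = len(block.samples)
        for i, sample in enumerate(block.samples):
            t = block.start + i
            if 0 <= t < length:
                pos = i / (n - 1) if n > 1 else 0.0
                out[t] += sample * block.gain * envelope_at(block.envelope, pos)
    return out


def shift(block, offset):
    """Dragging a block sideways: same sound, new start time."""
    return SoundBlock(block.samples, block.start + offset, block.gain, block.envelope)
```

In this sketch, dragging a block with the mouse corresponds to calling `shift` and re-running `mix`; a real editor working on large sound files would presumably avoid recomputing the whole mix each time.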

You can audition the sounds as you are working on them for immediate aural feedback, and facilities also exist for incorporating spectral analysis and modification of sound blocks - though you need some external hardware to do this.

All in all, a rather impressive piece of software. It's currently being used extensively at IRCAM in Paris to edit and mix large sound files, while a company in the States has manufactured a CD quality storage/retrieval hardware device for use with the software. It should also be available suitably formatted for use with various commercial sampler units, and with luck, it will also get ported to run on the Atari ST.

Discussions after the papers centred around the need to develop more integrated multiple-notation systems that incorporate the best traditional notation software with the new graphical representation systems such as those by Adrian Freed and Peter Desain.

As much information as possible available on screen or instantaneously switchable is what is called for. Standards for porting and cross-compiling different software were therefore also discussed.

There were a number of papers on the synthesis of complex audio spectra. The most impressive of these was presented by Joseph Mark and John Polito from Stanford. Using data reduction/analysis techniques on recorded piano tones, they have derived a synthesis model which is a complex combination of FM, additive synthesis and filtering.

They played examples of their model realised on a large computer system at Stanford, and I must say that down to the finest detail (such as hammer noise) it was the best synthesised piano sound I've ever heard - and better than most samplers, too. All it needs now is for Yamaha or somebody to put it onto a chip and ... yep, want one.

Undoubtedly, there were representatives from commercial companies present at the ICMC this year, but unfortunately the presentations by them that I attended were a bit disappointing.

Roland, for example, laid on just about the most spectacular display of hardware seen at the whole conference. They installed huge rack units filled with every available rack-mounting Roland device, plus all the keyboards and a full complement of IBM composing and performing software. But alas, it was all more like a showroom than a conference presentation.

Floris Kolvenbach, the man responsible for the showroom, spent most of the available time dishing out superlatives. Very little technical description and evaluation (of the sort that have made past ICMC presentations by commercial companies so successful) took place at all.

An afternoon session on networking at ICMC provided lots of proposals, but not very many concrete decisions. Proposals for combining MIDI, SMPTE, software downloading and sound file porting were all discussed, as well as possible ways of incorporating them into a Local Area Network (LAN) for musicians to communicate with each other. (See end of feature for contact address).

Present at the conference from Britain was a contingent of composers from York University, who are developing an interesting music workstation and networking system for the Atari ST. It'll include a lot of digital audio processing and editing facilities, as well as interactive composition and performance programs. They are already in close consultation with British Telecom over their networking proposals, and with luck, we'll be able to bring you more details about the project shortly.

Other Brits present at the conference were Chris Jordan and Dr Kevin Jones, who both gave impressive demonstrations on the Music 5000 system for the BBC micro (see review in last month's MT). There were several rivals to the 5000 at the conference - like a rather clever system from New Yorker David Rayna - but it was a good opportunity to compare notes.

Just part of the equipment line-up used by Roland for their computer music seminar. IBM-based systems formed the basis of the demo, but it could have been more informative.

A large chunk of the conference (and its music) was to deal with new input languages and interactive input controllers for composition and performance. MIDI-Lisp and M-LOGO are two closely related languages that have been used in this area and were described at the conference. They can be used for both real and non-real time processing and manipulation of sound files, including MIDI data files. Graphical icons, windows and menus as well as actual physical objects such as MIDI keyboards and custom-built input devices are used to activate processes and access pre-programmed functions.

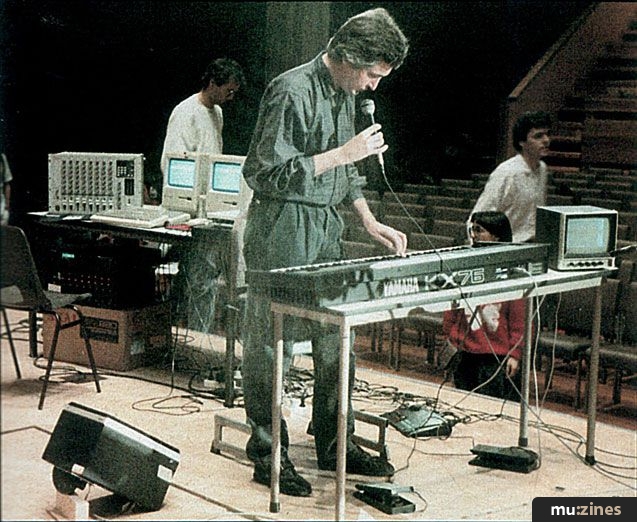

David Wessel of IRCAM's small systems development team outlined the way he uses MIDI-Lisp in a piece that was to be performed at the conference. A Fairlight Voicetracker is used to extract pitch, rhythmic and dynamic information from a live saxophone played by Roscoe Mitchell. The information is converted to MIDI and fed to a Macintosh running MIDI-Lisp. A Yamaha KX76 keyboard manned by Wessel himself is connected to the computer and acts as a performance controller.

The system is programmed so that the lower half of the keyboard activates MIDI data Record commands. So whenever a key in this region is pressed, whatever MIDI data is coming in gets recorded until the key is released. The block of MIDI data associated with that key can then be transformed, augmented or processed in some way and is accessed at corresponding "play" keys on the upper part of the keyboard. The actual sounds are produced via a bank of MIDI instruments including Yamaha TX816, Akai S900 and Yamaha SPX90.

So the transformed/manipulated MIDI data is re-injected into the performance by the "keyboard player". MIDI-Lisp provides enormous possibilities for this interaction between live instrumentalist and "live event processor".
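The record-and-replay scheme described above can be sketched in a few lines. This is an illustration in Python rather than MIDI-Lisp, and it is not Wessel's program: the class `KeySplitRecorder`, the split point at key 60, the pairing of each play key with the record key exactly `split` keys below it, and the `(time, note, velocity)` event format are all assumptions made for the example.

```python
SPLIT = 60  # hypothetical split point: keys below record, keys at or above play


class KeySplitRecorder:
    """Lower-half keys capture incoming events; upper-half keys replay them."""

    def __init__(self, split=SPLIT, transform=None):
        self.split = split
        # The transform stands in for whatever processing is applied on playback.
        self.transform = transform or (lambda events: list(events))
        self.recording = set()   # record keys currently held down
        self.blocks = {}         # record key -> list of captured events

    def key_down(self, key):
        """Start capture for a record key; return events to play for a play key."""
        if key < self.split:
            self.recording.add(key)
            self.blocks[key] = []        # a fresh press overwrites the old block
            return []
        source = key - self.split        # assumed pairing of play key to record key
        return self.transform(self.blocks.get(source, []))

    def key_up(self, key):
        """Releasing a record key stops its capture."""
        self.recording.discard(key)

    def incoming(self, event):
        """Feed one event from the live instrument, e.g. a pitch-tracked note."""
        for key in self.recording:
            self.blocks[key].append(event)


def transpose(semitones):
    """Example transformation: shift every (time, note, velocity) event in pitch."""
    def apply(events):
        return [(t, note + semitones, vel) for (t, note, vel) in events]
    return apply
```

With `transform=transpose(12)`, holding record key 10 while the saxophone plays and then pressing play key 70 would return the captured phrase an octave higher. Scheduling the returned events in time and sending them to the synthesisers is left out of the sketch.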

However, the concert itself showed that while the system is fascinating in its process capabilities, its aural results are less impressive. Most of the interest seemed to be in the relationship between what was controlling and what was being controlled, and with the identification and appreciation of processes for their own sake.

This certainly wasn't the case for Michel Waisviz from Steim, whose piece 'Touch Monkeys' made brilliant use of a set of input controllers called (imaginatively) The Hands. This system was first described in a paper at the 1985 ICMC in Vancouver and outlined in my report in E&MM December '85.

The Hands are literally attachments to a musician's hands. They consist of plates containing a complex network of switches and touch-sensitive surfaces. They also incorporate a sonar transmitter/receiver pair so that when the performer (Waisviz) moves his arms apart, a varying "hand displacement" signal is generated.

David Wessel used a sophisticated arrangement of two Macintosh computers, a Yamaha TX816 rack and an Akai MPX820 MIDI mixer as part of his ICMC concert performance

All the signals generated by The Hands are converted into stipulated MIDI codes, which are then fed to a computer-driven MIDI playback system - in this case two TX816 racks. The incoming data can trigger pre-programmed sequences or be subjected to event processing and transformation, just like David Wessel's MIDI-Lisp system. And via System Exclusive programming, the input signals can access voicing and function control parameters on the TX816s.

So in performance, the sonar "hand displacement" signal for example is mapped to the "data slide" controls of the TX816, which in turn access different parameters of the internal voices of each module. When Waisviz opens out his arms, he activates a massive layered cluster of sound which is dramatically distributed over a surround-sound loudspeaker system - each TX816 module (TF1) seemingly having its own speaker.
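As a rough illustration of that mapping, the sketch below scales a hand-separation reading to a 7-bit MIDI value and wraps it in a standard Control Change message on controller 6 (Data Entry). It is an assumption-laden stand-in, not The Hands' firmware: the real system used System Exclusive programming, and the function names, the centimetre units and the 10-150 cm range are invented for the example.

```python
def distance_to_controller(distance_cm, min_cm=10.0, max_cm=150.0):
    """Scale a hand separation reading to a 7-bit MIDI value (0-127).

    The range limits are hypothetical; readings outside them are clamped.
    """
    clamped = max(min_cm, min(max_cm, distance_cm))
    fraction = (clamped - min_cm) / (max_cm - min_cm)
    return round(fraction * 127)


def control_change(channel, controller, value):
    """Build a 3-byte MIDI Control Change message (channel 0-15)."""
    return bytes([0xB0 | (channel & 0x0F), controller & 0x7F, value & 0x7F])


DATA_ENTRY = 6  # MIDI controller number for Data Entry (MSB)


def hands_message(distance_cm, channel=0):
    """Turn one sonar reading into a Data Entry message for one module."""
    return control_change(channel, DATA_ENTRY, distance_to_controller(distance_cm))
```

Arms fully open would then send the maximum value of 127, and arms together the minimum of 0, with each TX816 module addressed on its own MIDI channel.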

There were powerful moments aplenty in the performance. The DX voices used were the richest and most un-DX7-like I've ever heard, and this, together with the visual drama of seeing the sounds being generated, processed and distributed from the performer's movements on stage, helped to make 'Touch Monkeys' the musical high point of the whole conference.

It was also the best piece of music I've heard for a very, very long time.

There were several other systems that worked along similar lines, though. For example, Phillipe Menard's Synchronos made use of light-beam sensing and movement as input control. This system was quite pretty, and even dramatic when performed upon, but didn't approach The Hands for sheer impact.

Another system called Formula runs on the Atari ST, and was demonstrated at the conference by its developer, Ron Knivela. It's basically a versatile FORTH language-based program for generating and manipulating MIDI data. Incoming MIDI data can also be processed and can interact with internally generated MIDI data. The software is available on request (a small outlay to cover expenses might be required) from (Contact Details) - but you'll need to learn FORTH to use it.

Software writer Adrian Freed demonstrating his MacMix program, one of many music systems based on the Apple Macintosh computer demonstrated at ICMC

FORTH was also the language used by Joel Ryan from Steim to program an orchestra of ten Yamaha CX5 computers for a performance of a piece by George Lewis called 'Empty Chair'.

Once again, music being played by a live musician (this time Douglas Ewart on clarinet, sax and bamboo flutes) is converted to MIDI and fed into a Macintosh, which then sends out control data to the CX5 orchestra - which duly responds. I wasn't too impressed with the CX5 "orchestra sound". Maybe it wasn't quite working as well as it should have been, but it sounded just like a single DX7 most of the time.

Luckily, the piece was saved by a good light show and by a strange concurrent and interactive video performance from Steve Potts.

Finally on the musical front, there were some interesting pieces that made use of mechanically played instruments.

To start with, there were Dan Carney and Alex Bersten. These are the men responsible for convincing Bosendorfer to wire up one of their pianos to a computer - hence the system described in last month's MT. They are also responsible for several other wired-up efforts, including vibraphone and percussion instruments. At the ICMC they "performed" a rather frenetic series of pieces that made full use of their instruments - all playing together at virtuoso speeds. But in the end it came over as being a bit flashy and inconsequential - another case of the system being more interesting than the music, really.

Clarence Barlow, on the other hand, performed a piece on the mechanical piano that was more subtle and musically rewarding. He started off by playing some mellow and romantic classical music, but gradually, pre-programmed mechanical outbursts started to take over, until finally Barlow was forced to retire while the piano rejoiced with a beautifully quirky and rhythmic variety of systems music.

There were a number of other musically enjoyable moments at the conference, but mostly I found myself dulled and disappointed.

I was hoping to find out some of the reasons for this by attending one of the last panel discussions of the conference. This was about Education in Computer Music, and the subheading "adjusting to the changing definition of computer music" sounded promising. However, I was dismayed when (for instance) the panel - responding to a question on the problem of the enormous "knowledge explosion" in computer music - all immediately assumed that the more you know, the better a composer/performer you'll be. In their eyes, the problem has more to do with "efficiency and clarity of instruction" than anything else.

Maybe if the discussion had centred on the very nature of the "knowledge explosion" in computer music and especially its existence within a social (and political/economic) musical framework, it would have been more interesting. As the performances at ICMC showed, a lot of computer music being produced today is bound up with research and academic concerns, rather than purely musical ones. If the discussions had been angled more acutely, they might have done something to change that.

One way out might be through the establishment of new forms of research, together with educational centres that are not so strongly linked to the traditional music college approach. Give these centres stronger links with equipment manufacturers, not to mention radio stations, record and film companies, and they could give equal encouragement to all musical approaches - not just the western classical one.

Holland itself is making promising headway in this direction. Both Steim Studios and a new facility set up in Utrecht by Johan den Biggelaar (an ex-Steim man, no less), who gave a talk at ICMC, are well on the way to presenting viable alternatives to many of the existing computer music structures, approaches and "knowledge bases" - whatever that may mean.

Technical details of the proposals discussed at ICMC are too complex to present here, but anyone wishing to find out more can send off for a six-page document outlining some goals and a recent discussion of proposed new standards. The address to send to: (Contact Details)

Publisher: Music Technology - Music Maker Publications (UK), Future Publishing.

The current copyright owner/s of this content may differ from the originally published copyright notice.

Show Report by Ron Briefel