
All About Additive (Part 1)

Article from Music Technology, April 1988

In the first of a two-part series, Chris Meyer looks into the workings of additive synthesis and how it can be used to accurately mimic naturally-occurring sounds.

What is additive synthesis? This two-part series explains it, and what separates it - and resynthesis - from other synthesis techniques.

Image credit: Toby Goodyer

A QUESTION: WHAT is sound made of? You can consider sound to be made up of layers called harmonics or partials. These determine the character of a sound with their presence and their amplitude, with respect to the fundamental pitch (or fundamental frequency) of the sound (in the case of pitched sounds). The process of manipulating sounds by controlling these harmonics is known as additive synthesis.

Pitch and Timbre

LET'S START WITH the common terms "pitch" and "frequency". We tend to use them interchangeably. Low frequencies or pitches are, well, low, and high ones are, er, high. To separate them, you need an understanding of timbre. Try this experiment: say the word "Hawaii" very slowly, taking pains to maintain the same pitch (in other words, in a monotone). Although the pitch should have stayed constant, each syllable will have sounded different. This is because each syllable had a different timbre - in other words, each one was built from a different combination of harmonics. For example, the last syllable ("eeee") has higher frequencies present than, say, the first one ("huhhh"), even though they have the same fundamental pitch. Basically, a sound can be broken down into combinations of frequencies. The fundamental pitch of the sound is often referred to simply as the "fundamental", and the others become "harmonics".

Harmonics

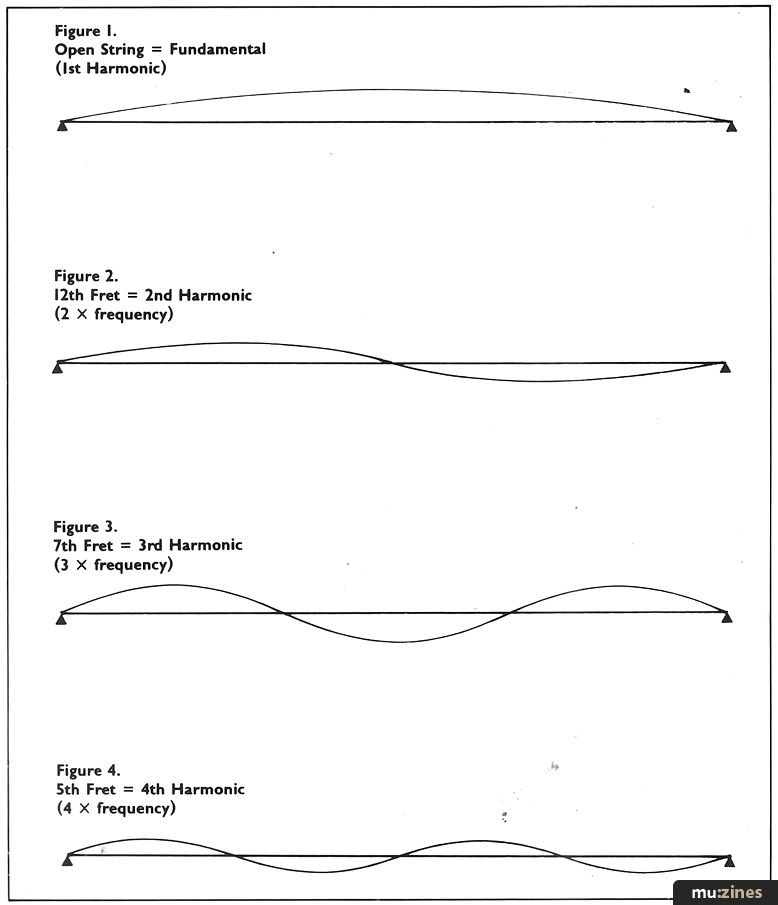

TO FIND OUT how these frequencies are related to the fundamental, let's take a look at the way a guitar string produces sound. It's secured at its ends so that when plucked, it vibrates. The resultant movement is that the string tries to move one way and then the other (see Figure 1). This movement produces the fundamental frequency of the sound. A second movement occurs where one half of the string is trying to move one way and the other half the other way, with the middle acting as a pivot (see Figure 2). This produces a frequency twice that of the fundamental. Those of you who play guitar know that by damping a string over the 12th fret (the half-way mark), a "harmonic" is produced that's one octave above that of the open string. This is called the "second harmonic", and is twice the frequency of the fundamental.

A third movement of the string is where the middle is moving one way, and parts of the string on either side are moving the other around pivot points at third-way points along the string (see Figure 3). This relates to the harmonic produced at the seventh fret (oddly enough, the third-way mark), which is an octave and a fifth above the open string. It's actually three times the fundamental frequency, and so is the third harmonic. The fourth harmonic is produced by the string dividing itself into four equal sections and vibrating at four times the fundamental (Figure 4). And so it continues, with each harmonic being progressively quieter than the last.

This behaviour is not peculiar to guitar strings; anything that vibrates has a certain relationship of frequencies between its fundamental and its harmonics. For example, pipes - organ pipes, wind instruments and so on - build up patterns similar to the captive string. This relationship is referred to as "the integer harmonic series" because the frequencies of the harmonics are integer multiples (1, 2, 3, 4...) of the fundamental frequency.
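To put some numbers on the integer harmonic series, here is a minimal Python sketch. The 110Hz fundamental (roughly an open A string) and the function names are assumptions made for illustration only; the conversion to semitones uses the standard equal-tempered formula of 12 times the base-2 logarithm of the frequency ratio.

```python
import math

def harmonic_frequencies(fundamental_hz, count):
    """Return the first `count` frequencies of the integer harmonic series."""
    return [n * fundamental_hz for n in range(1, count + 1)]

def interval_in_semitones(ratio):
    """Convert a frequency ratio into equal-tempered semitones."""
    return 12 * math.log2(ratio)

# 110 Hz is an assumed example value, not a figure from the article.
for n, freq in enumerate(harmonic_frequencies(110.0, 4), start=1):
    semitones = interval_in_semitones(freq / 110.0)
    print(f"harmonic {n}: {freq:.1f} Hz, {semitones:.2f} semitones above the fundamental")
```

The second harmonic comes out 12 semitones up (one octave), and the third about 19 semitones up, which is an octave (12) plus a fifth (7): the same intervals heard at the 12th and seventh frets.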

The question that remains is, if most instruments have the same harmonic series, why do they sound different? Because the relative "loudness" or amplitude of the harmonics is different. Different combinations of the harmonic series produce different timbres.

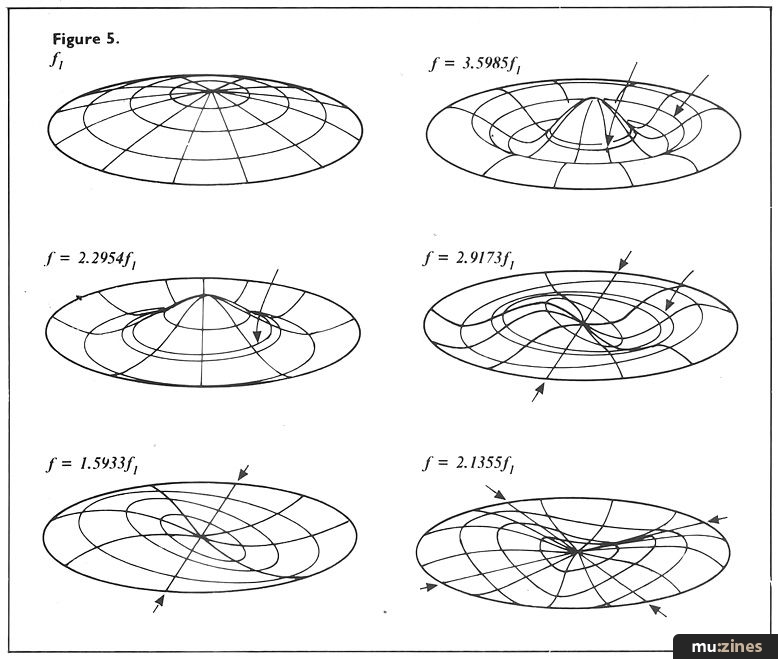

Some instruments do not have an integer harmonic series and thus sound different. The most common exceptions are vibrating membranes (drum heads, cymbals, manhole covers) - their relationship follows a series of fundamental, 1.5933 times the fundamental, 2.1355 times, 2.2954 times, 2.9173 times, 3.5985 times and so on (see Figure 5).

The timbre of a sound is the final result of its harmonic series and the mix of its harmonics. From this point, it's just a small leap to work backwards and see that the timbre of any sound can be broken down and described in those terms.

A synthesiser waveform is also very similar to the vibrating guitar string. A sine wave is an example of a perfectly pure tone - its fundamental pitch is the only harmonic present. Now think of one cycle of a wave as being bounded on each end, like our guitar string; one cycle of a sine wave is the first harmonic, a pair of them between the same end points forms the second harmonic (twice the frequency of the fundamental), three give us the third harmonic, and so on.

A square wave is characterised by odd-numbered harmonics (first, third, fifth, seventh, ad infinitum). The level of each is the inverse of its harmonic number (so the third harmonic has a third of the amplitude of the fundamental). So mixing different harmonics at different levels produces different waveshapes, and different waveshapes equate to different timbres.
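The square wave recipe is easy to try out. The sketch below sums odd harmonics at 1/n of the fundamental's amplitude and evaluates the result around one cycle; the function name, the 16 sample points and the cut-off at the 99th harmonic are all arbitrary choices for illustration.

```python
import math

def square_from_harmonics(phase, highest_harmonic):
    """Sum odd harmonics, each at 1/n amplitude, at a phase given in cycles."""
    total = 0.0
    for n in range(1, highest_harmonic + 1, 2):  # 1, 3, 5, ...
        total += math.sin(2 * math.pi * n * phase) / n
    return total

# One cycle sampled at 16 points, using odd harmonics up to the 99th.
cycle = [square_from_harmonics(i / 16, 99) for i in range(16)]
print(" ".join(f"{v:+.2f}" for v in cycle))
```

With only the first harmonic you get a plain sine wave; as more odd harmonics are added, the first half of the cycle flattens out towards a positive plateau and the second half towards a negative one (near plus and minus pi/4 with this scaling).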

Figure 1. Open String = Fundamental (1st Harmonic)

Figure 2. 12th Fret = 2nd Harmonic (2 X frequency)

Figure 3. 7th Fret = 3rd Harmonic (3 X frequency)

Figure 4. 5th Fret = 4th Harmonic (4 X frequency)

Dynamic versus Static

CONGRATULATIONS - YOU HAVE just waded through a mass of physics to get to the fundamental (pardon the pun) point of additive synthesis. By adding together sine waves we can create sounds; by varying their mix and harmonic relationship we can create different timbres - in theory, any timbre you care to imagine. And, in theory, by taking care when choosing the harmonics and the mix, you can recreate any sound.
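In code, the whole idea fits in a few lines. This is only a sketch of a static additive tone: the list of (frequency ratio, amplitude) pairs, the 8kHz sample rate and the two example mixes are assumptions made here, not the workings of any particular instrument.

```python
import math

def render_additive(partials, fundamental_hz, duration_s, sample_rate=8000):
    """Mix sine waves into a list of samples.

    `partials` is a list of (frequency_ratio, amplitude) pairs; this layout
    and the default sample rate are assumptions of the sketch.
    """
    samples = []
    for i in range(round(duration_s * sample_rate)):
        t = i / sample_rate
        value = sum(
            amp * math.sin(2 * math.pi * ratio * fundamental_hz * t)
            for ratio, amp in partials
        )
        samples.append(value)
    return samples

# Same harmonic series, two different mixes, so two different timbres.
mellow = render_additive([(1, 1.0), (2, 0.2), (3, 0.05)], 220.0, 0.01)
bright = render_additive([(1, 1.0), (2, 0.8), (3, 0.6)], 220.0, 0.01)
```

Because the ratios need not be whole numbers, the same function would accept a non-integer series such as the membrane ratios quoted earlier.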

What we're able to do is build a steady tone of any timbre using simple additive synthesis. But let's face it - steady tones, no matter how decorative the harmonic series, get boring after a while. Our ears need to hear some kind of "movement". The plucked guitar string, for example, "moves" through the course of its decay - the timbre is constantly changing and evolving.

It comes down to dynamic or static waveforms. Only straight synthesiser waveforms are truly static; in the real world, each harmonic of a sound has a life of its own, rising, changing, and falling with an identity that helps form the whole.

Three things give each harmonic its individual identity. Firstly, its frequency might be slightly detuned from an exact integer multiple (such as 3.02 instead of three times the fundamental). This creates a beating effect inside the sound, akin to two instruments or strings being slightly out of tune. The upper harmonics of a piano string are slightly sharp relative to their fundamental; this is why an acoustic piano has to be "stretch-tuned" - the upper notes are tuned slightly sharp so that their fundamentals are more in tune with the harmonics of the notes below them. Likewise, the lower notes are tuned slightly flat, so that their harmonics are in tune with the fundamentals of notes above them. Without this approach to tuning, the piano would sound out of tune. Anyone who's tried to loop a guitar or piano on a sampler has also heard the subtle phasing of detuned harmonics.
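As a rough illustration of how fast that beating is, the sketch below compares a partial at 3.02 times the fundamental with an exact third harmonic, as if the two were sounding together. The 110Hz fundamental and the function names are assumptions; the envelope comes from the standard identity for the sum of two equal-level sines.

```python
import math

def beat_frequency(fundamental_hz, ratio_a, ratio_b):
    """Beat rate in Hz between two partials given as multiples of the fundamental."""
    return abs(ratio_a - ratio_b) * fundamental_hz

def beat_envelope(t, beat_hz):
    """Slow amplitude envelope of two equal-level sines beating at beat_hz."""
    return 2 * abs(math.cos(math.pi * beat_hz * t))

rate = beat_frequency(110.0, 3.0, 3.02)  # 110 Hz is an assumed example value
print(f"beat rate: about {rate:.1f} Hz")
print(f"envelope at t=0: {beat_envelope(0.0, rate):.2f}")
print(f"envelope half a beat later: {beat_envelope(1 / (2 * rate), rate):.2f}")
```

A detuning of just 0.02 gives a swell a little over twice a second here: the two components reinforce each other at one moment and cancel half a beat later.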

The second dynamic characteristic of a harmonic is its amplitude envelope. The mix of harmonics changes over time, modifying the timbre. This change may be gradual (such as the higher harmonics dying away on a plucked string) or wild, like the start of a horn blast before it settles down to a sustained tone. The levels of individual harmonics also occasionally undulate slowly during a sound, adding a bit of life as the timbre subtly changes.
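A per-harmonic amplitude envelope might be sketched like this for the plucked string case. The exponential shape, the 1/n starting levels and the decay rate are illustrative guesses rather than measurements of a real string.

```python
import math

def harmonic_amplitude(n, t, base_decay=3.0):
    """Amplitude of harmonic `n` at time `t` seconds.

    Starts at 1/n and decays exponentially, with higher harmonics dying
    away faster. Both choices are assumptions made for this sketch.
    """
    return (1.0 / n) * math.exp(-base_decay * n * t)

def plucked_sample(t, fundamental_hz, harmonic_count=8):
    """One output sample: every harmonic scaled by its own envelope."""
    return sum(
        harmonic_amplitude(n, t) * math.sin(2 * math.pi * n * fundamental_hz * t)
        for n in range(1, harmonic_count + 1)
    )

# The mix changes over time: compare harmonic 4 with the fundamental.
for t in (0.0, 0.1, 0.5):
    ratio = harmonic_amplitude(4, t) / harmonic_amplitude(1, t)
    print(f"t={t:.1f}s  4th harmonic / fundamental = {ratio:.3f}")
```

The printed ratio shrinks as time passes, which is the timbre mellowing as the upper harmonics die away ahead of the fundamental.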

A more extreme example of the harmonic mix changing is an electric guitar note building into feedback. The placement of the guitar pickups, equalisation on the amp, and resonance of the guitar's body produce harmonics that, under certain conditions, are amplified, picked up by the pickups to be amplified again... Meanwhile, the other harmonics naturally die away.

The third consideration is the pitch envelope of each harmonic. Before it settles down to a steady frequency, a harmonic may vary during the attack. This is most noticeable in horn "blips", where the pitch of the harmonics quickly falls before stabilising.
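A pitch envelope of this kind can be sketched as a frequency that starts sharp and falls quickly to its settled value. The amount of overshoot, the settling time and the function names are all assumptions for illustration. The oscillator accumulates phase one sample at a time so that the changing frequency produces a smooth glide.

```python
import math

def blip_frequency(t, settled_hz, overshoot=0.06, settle_time=0.02):
    """Frequency at time t: starts sharp, then falls towards settled_hz."""
    return settled_hz * (1.0 + overshoot * math.exp(-t / settle_time))

def render_blip(settled_hz, duration_s, sample_rate=8000):
    """Sine oscillator whose phase is accumulated sample by sample."""
    samples = []
    phase = 0.0  # measured in cycles
    for i in range(round(duration_s * sample_rate)):
        samples.append(math.sin(2 * math.pi * phase))
        # Advance the phase by the current frequency over one sample period.
        phase += blip_frequency(i / sample_rate, settled_hz) / sample_rate
    return samples

blip = render_blip(440.0, 0.1)
print(f"start: {blip_frequency(0.0, 440.0):.1f} Hz, "
      f"after 0.1 s: {blip_frequency(0.1, 440.0):.1f} Hz")
```

In a full additive voice each harmonic would get its own version of this envelope, scaled to its own frequency.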

Additive Answers

NO OTHER SYNTHESIS technique has quite the power of additive. Subtractive synthesis starts with a given set of relationships between given harmonics and then removes some of them with a filter. However, the basic waveforms may not have the harmonics needed (for example, a square wave lacks even harmonics), or the filter may not be clever enough to create the exact mix we need. FM and phase distortion synthesis start with sine waves, but modulate the frequency of one with another as opposed to adding them together. This modulation often creates interesting harmonic series (including non-integer ones), but again the result might not include the harmonics or mix required, and controlling this is often difficult.

Wavetable machines have more complex basic waveshapes, but they have two main drawbacks. One: they cannot support harmonic series such as those of vibrating membranes (you can't fit 1.5933 sine waves into one wave without some serious distortions). Two: sounds often change in timbre over time, and if this change is more drastic than just a few higher harmonics appearing and disappearing, again, the filter may not be up to the task.
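The first drawback can be seen by looking at the point where a single-cycle table loops back to its start. This is a small sketch with a made-up function name: it measures the jump in level between the end of the table and its beginning when the table holds a given number of sine cycles.

```python
import math

def wrap_discontinuity(cycles_in_table):
    """Jump in value where a single-cycle table loops back to its start."""
    start = math.sin(0.0)
    end = math.sin(2 * math.pi * cycles_in_table)  # value reached at the loop point
    return abs(end - start)

print(f"2 cycles:      jump of {wrap_discontinuity(2.0):.3f}")
print(f"1.5933 cycles: jump of {wrap_discontinuity(1.5933):.3f}")
```

A whole number of cycles joins up cleanly, while 1.5933 cycles leaves a step of more than half the sine's peak level every time the table repeats. That step is the "serious distortion", and it adds harmonics that were never asked for.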

Additive synthesis is a technique that does afford control over every component of a sound. However, there are a few edges to this sword. One, not all "additive" machines may give you control over every aspect of each harmonic, thus preventing you getting as much realism as you might like. Wavetable machines may let you choose just one harmonic series to start with, and you'll then have to use multiple oscillators for detuning, filters for creating harmonic envelopes, and pitch envelopes to give the whole sound a shape. Some machines are cleverer and allow two to four waveforms (sets of harmonics) to be individually detuned, amplitude enveloped, and pitch enveloped. (Some of those grey-area "additive" machines will be explored in more detail next month.)

Figure 5.

Assuming that a machine gives you individual harmonics to play with, you must ask: do they give you enough control (such as detuning, individual amplitude envelopes)? Do they even give you enough harmonics to play with? (Eight is skimping for all but the highest frequencies or most bland of sounds: 16 will cover the majority of cases.)

Finally, if you're given all this control over sound, you have to use it. Building a sound by setting the amplitude and pitch envelopes (not to mention frequency) of each harmonic is rather laborious (a little like building a sand castle grain by grain) although some shortcuts are available.

Using FM synthesis for a few harmonics to build up larger groupings, using non-sine waveshapes for the individual harmonics (any non-sine waveshape has a harmonic series of its own, with its "fundamental" being the frequency of the harmonic), and treating groups of harmonics as blocks are all useful tricks. When it comes to frequency envelopes, although each harmonic may take a separate path in reality, it usually happens so quickly that bending them all fast enough and by the same amount covers the effect.
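To see why a non-sine waveshape works as a shortcut, consider dropping a square wave in where a single sine would have gone. The sketch below lists the partials it contributes, using the square wave recipe of odd multiples at 1/n level; the function name and the example level are hypothetical.

```python
def square_wave_partials(placed_at_harmonic, level, count=4):
    """Partials contributed by a square wave used in place of one sine.

    A square wave holds odd multiples (1, 3, 5, ...) of its own frequency
    at 1/n of its level, so placing it at harmonic k yields k, 3k, 5k, ...
    Returns (harmonic_number, amplitude) pairs.
    """
    return [
        (placed_at_harmonic * n, level / n)
        for n in range(1, 2 * count, 2)
    ]

for harmonic, amplitude in square_wave_partials(2, 0.5):
    print(f"harmonic {harmonic}: amplitude {amplitude:.3f}")
```

One oscillator placed at the second harmonic fills in the sixth, tenth and 14th as well, at the cost of no longer being able to set those levels independently.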

Resynthesis

ADDITIVE SYNTHESIS IS the process of building sounds up from scratch. Learning how to do it came from studying how to break sounds down in the first place. The whole process of breaking down and rebuilding is called resynthesis.

Resynthesis is a tricky art that has been for the most part restricted to wishful laboratory thinking. Part of the problem lies in how hard it is to put sounds back together again accurately. Having a computer break them down in the first place is even harder. Picking out all the individual harmonics and their paths from the whole is akin to being handed a city map with no street names and being asked to trace and rename all the streets. Also, real harmonics move with such complexity that it would take hundreds of envelope segments to trace them accurately. Some compromises have to be made, and the art of finding which compromises are acceptable to the ear is a study in itself.
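The easy end of the analysis problem can be sketched in a few lines: given exactly one cycle of a steady waveform, correlating it against a sine and cosine at each harmonic (a plain discrete Fourier transform) recovers the mix. This is deliberately the simplest possible case, built on a made-up test waveform; it ignores everything that makes real resynthesis hard, such as harmonics that drift in level and pitch.

```python
import math

def harmonic_amplitudes(samples, harmonic_count):
    """Estimate harmonic amplitudes from exactly one cycle of a waveform."""
    size = len(samples)
    result = []
    for n in range(1, harmonic_count + 1):
        # Correlate the cycle with a cosine and a sine at harmonic n.
        re = sum(s * math.cos(2 * math.pi * n * i / size) for i, s in enumerate(samples))
        im = sum(s * math.sin(2 * math.pi * n * i / size) for i, s in enumerate(samples))
        result.append(2 * math.hypot(re, im) / size)
    return result

# Build a known test cycle (made-up data), then recover its mix.
size = 64
cycle = [
    1.0 * math.sin(2 * math.pi * i / size) + 0.5 * math.sin(2 * math.pi * 3 * i / size)
    for i in range(size)
]
print([round(a, 3) for a in harmonic_amplitudes(cycle, 4)])
```

The estimates should come back close to 1.0 for the first harmonic and 0.5 for the third, with next to nothing at the second and fourth.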

But what use is resynthesis? In between breaking down a sound and putting it back together again, we can play with it. Some of the simplest forms of "playing" include dropping all the harmonics an octave while keeping the time evolution the same, and boosting or cutting certain harmonics relative to others. Hybrid instruments can be created by taking the upper harmonics of one and the lower harmonics of another.
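Once a sound has been reduced to a list of harmonic levels, these kinds of "playing" become simple list operations. The sketch below assumes each sound is stored as a list of amplitudes starting from the fundamental; the two example mixes are invented and do not come from real instruments.

```python
def hybrid(mix_a, mix_b, split_harmonic):
    """Take harmonics below `split_harmonic` from mix_a and the rest from mix_b.

    Each mix is a list of amplitudes, index 0 being the fundamental.
    """
    return mix_a[:split_harmonic - 1] + mix_b[split_harmonic - 1:]

def scale_harmonics(mix, gains):
    """Boost or cut selected harmonics; `gains` maps harmonic number to a factor."""
    return [amp * gains.get(n, 1.0) for n, amp in enumerate(mix, start=1)]

flute_like = [1.0, 0.3, 0.1, 0.05, 0.02, 0.01]   # made-up example data
brass_like = [1.0, 0.9, 0.8, 0.6, 0.5, 0.4]      # made-up example data

print(hybrid(flute_like, brass_like, 4))               # lower three from one, rest from the other
print(scale_harmonics(flute_like, {2: 2.0, 3: 0.0}))   # double the 2nd, remove the 3rd
```

Dropping the sound an octave needs no change to this data at all: the same amplitudes are simply rebuilt on half the fundamental frequency, so the way the sound evolves over time is untouched.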

Another common technique is to take the midsection of a sound and stretch it. This technique is often referred to as "frame resynthesis", where the evolution of a sound is broken up into snapshots, or "frames", of time. Frames can then be repeated, or read back more slowly to slow a sound down.
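Stretching the midsection by repeating frames can be sketched as follows. Each frame is assumed here to be a snapshot of harmonic amplitudes, and the example data and function name are invented for illustration.

```python
def stretch_midsection(frames, start, end, factor):
    """Repeat each frame in frames[start:end] `factor` times, leaving the
    attack before `start` and the decay from `end` onwards untouched."""
    middle = [frame for frame in frames[start:end] for _ in range(factor)]
    return frames[:start] + middle + frames[end:]

# Each frame is a snapshot of harmonic amplitudes (made-up example data).
frames = [
    [0.2, 0.9, 0.7],   # attack
    [1.0, 0.6, 0.4],
    [0.9, 0.5, 0.3],
    [0.8, 0.4, 0.2],
    [0.3, 0.1, 0.0],   # decay
]
stretched = stretch_midsection(frames, 1, 4, 3)
print(len(frames), "frames become", len(stretched))
```

Five frames become 11: the attack and decay keep their original length while the three middle frames each last three times as long. Because only the frame sequence changes, the pitch stays where it was.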

However, hearing your own voice say "Hawaii" that much slower may not be a weighty enough trick to justify all the fuss. The next degree of control after breaking a sound down is attaching performance controls to the components before it's put back together again. Imagine taking a sample of a woodwind instrument, and having keyboard velocity or pressure change the harmonic mix of the sound. This is the pot of gold at the end of the technological rainbow. Take any sound and rearrange it however you want and put your life back into it, without the drudgery of having to do it all by yourself.

Series - "All About Additive"

Read the next part in this series:

All About Additive (Part 2)

(MT May 88)


Publisher: Music Technology - Music Maker Publications (UK), Future Publishing.


Feature by Chris Meyer
