The User Interface

Article from Sound On Sound, August 1988

Martin Russ explores how the user interface influences the 'feel' of a program.

Computers are very personal things. Everyone has their own favourite, usually based on experience of several competitors, and almost always their preferences are different from anyone else's. As computers seem to be converging towards a few 'standards', the differences between models have been eroded, leaving a situation where the large majority of personal computers based upon the Intel 8086 family of microprocessors claim to be compatible with the IBM PC, with almost all other contenders being very similar 68000-based machines (Atari, Macintosh, Amiga), and with both types having mouse-driven interfaces and high-resolution bit-mapped graphics capabilities.

With 8-bit computers, the user tended to be satisfied if a piece of software worked! The niceties of the user interface were generally left to the function or number keys, often with little thought as to how the user would cope in reality - the systems with multiple assignments to each little rubbery keyboard button being amongst the worst. The arrival of 16-bit computers changed all this forever, not because 16 bits is necessarily better than 8 bits but because the fast, powerful processing they offered was well suited to implementing a different type of man-machine interface. The rest is history, as they say - the WIMP environment has put Windows, Icons, Menus and mouse-driven Pointers into common use, enabling those people unable to cope with a terse 'A:>' prompt on a blank screen to use computers effectively.

Unfortunately, providing pull-down menus, dialogue boxes and other paraphernalia for the programmers has had its good side and its bad - the resulting software can either be effective and productive or frustrating and retrograde. A large percentage of how the user perceives the program is due to the appearance the program presents to the outside world - in other words, the user interface. Since user interfaces seem to crop up as a major topic in software reviews, I will try to present some measures for evaluating the effectiveness of these environments. In order to try and quantify them, I have taken several principles from current research into ergonomics and the man-machine interface and used measures which apply quite well to software that uses a WIMP environment.

MEASURES

[1] MOUSE MOVEMENT

The basic measure of this is given by Fitts's Law:

T = I * LOG2 (D/S + 0.5)

where T is the amount of time it takes to move the mouse to a target position, D is the distance of the target from the current position, S is the size of the target, and I is a proportionality constant of about 100 milliseconds per bit, which corresponds to the human being's 'clock rate' for making incremental movements.
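To get a feel for the numbers, the formula can be tried out in a few lines of Python. This is only a sketch: the function name, the pixel figures and the decision to clamp negative results to zero (the raw formula goes negative when the target is already under the pointer) are assumptions made for this illustration, not part of the law itself.

```python
import math

# Constant quoted in the article: about 100 milliseconds per bit.
I_MS_PER_BIT = 100.0


def fitts_time_ms(distance, size, i_ms_per_bit=I_MS_PER_BIT):
    """Estimate mouse movement time using T = I * log2(D/S + 0.5).

    distance and size must be in the same units (pixels here, as an assumption).
    """
    if distance < 0 or size <= 0:
        raise ValueError("distance must be >= 0 and size must be > 0")
    raw = i_ms_per_bit * math.log2(distance / size + 0.5)
    # Assumption: a negative estimate is meaningless, so treat it as zero.
    return max(0.0, raw)


# Hypothetical layout: a 20-pixel-wide 'OK' box, near to and far from the pointer.
near = fitts_time_ms(distance=40, size=20)
far = fitts_time_ms(distance=300, size=20)
print(f"near target: {near:.0f} ms, far target: {far:.0f} ms")

# D/S + 0.5 = 2 here, which is exactly one bit, so the estimate is 100 ms.
print(fitts_time_ms(distance=30, size=20))
```

Doubling the size of a target has the same effect on the estimate as halving its distance, since only the ratio D/S enters the formula.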

Notice that this law tells us that frequently used targets should be placed close together, so that mouse movement is minimised. How many programs have you seen which require you to keep hunting for the 'OK' box hidden somewhere on the screen (and in a different place each time!) in order to return to the main screen? This may be fun at first, but will soon give way to frustration and a loss of enthusiasm for using the program. This brings us to the second measure...

Figure 1.

[2] THE POWER LAW OF PRACTICE (PLP)

The rate at which practice speeds up a movement can be expressed as:

T(n) = T(1) * n ** (-a)

where T(n) is the time taken to move the mouse to the target on the nth trial, T(1) is thus the time on the first trial, and a is a constant with a value of about 0.4. '**' means exponentiation, and the negative exponent indicates that the time reduces as your muscles learn where things are. This relates to the 'muscle memory' phenomenon, which means that if a target is always in the same place, then the time to move the mouse to it is quickly minimised - how quickly can you type the word 'the' as opposed to three random letters?
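Reading the relation above as T(n) = T(1) * n ** (-a), the speed-up with practice can be tabulated with a short Python sketch. The function name and the one-second starting time are assumptions chosen for illustration; only the value of a comes from the article.

```python
def practice_time(t1, n, a=0.4):
    """Time on the nth trial, T(n) = T(1) * n ** (-a), with a of about 0.4."""
    if n < 1:
        raise ValueError("trial number n must be 1 or greater")
    return t1 * n ** (-a)


# Hypothetical first attempt: 1000 ms to find and hit a fixed 'OK' box.
first_trial_ms = 1000.0
for trial in (1, 2, 4, 8, 16, 32):
    print(trial, round(practice_time(first_trial_ms, trial)))
```

With a = 0.4, the 32nd trial takes roughly a quarter of the time of the first, but this only holds if the target stays put. Move the box and the user is back at trial one.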

In practice, this means that 'OK' boxes, etc, should always stay in the same place on the screen. Your body will learn where things are on the screen, and you should ideally find that your conscious actions focus on the task in hand, not on moving the mouse around. Your concentration will be able to work on what needs to be done, instead of having to think about how to manipulate the interface. This is very similar to the way that a sight-reading musician plays music - he concentrates on the notation, not on his hands. The control of the hands needed to play the required notes has moved away from conscious awareness and become a background activity. With a good, intuitive user environment, you should find that you need to concentrate less and less on moving the mouse, and more on what you are working towards.

Figure 2.

[3] HUMAN CYCLE TIME

Just as your ears can only resolve events in time to about one millisecond, your eyes have a similar limitation. If events are separated by much less than about 50 milliseconds, then they are seen as a single event - this is why movies look like continuous movement instead of 25 separate picture frames per second. This also means that on-screen boxes or cursors should respond to mouse movements within this time. Equally, flashing cursors or boxes which want to attract our attention need to bear this timing in mind.
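The arithmetic behind this is simple enough to check in Python. In the sketch below, the 50 millisecond threshold is the figure quoted above, while the function names and the sample latencies are assumptions made for illustration.

```python
# Threshold quoted in the article: events closer together than this tend to fuse.
FUSION_THRESHOLD_MS = 50.0


def frame_interval_ms(frames_per_second):
    """Time between successive frames, in milliseconds."""
    return 1000.0 / frames_per_second


def feels_immediate(latency_ms, threshold_ms=FUSION_THRESHOLD_MS):
    """True if a screen response arrives within the threshold."""
    return latency_ms <= threshold_ms


# 25 frames per second leaves 40 ms between frames, inside the 50 ms threshold.
print(frame_interval_ms(25))

# Hypothetical cursor update times for two programs.
print(feels_immediate(40.0), feels_immediate(120.0))
```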

If events do not happen within this critical time period then we begin to notice a time delay. Even if the next action is not dependent on the completion of that event, there will still be the distraction of waiting for the feedback to show that the event has occurred. Take the example of waiting at a red traffic light - how long does it take for you to realise that the lights have failed and are not changing? It is surprising how quickly people can decide that there is 'something wrong' with the timing of commonly encountered events like this example. Even when you have decided that the lights have failed, there will still be indecision as to what action to take. The same thing happens with time delays - if there is a variation in the timing of a familiar action, then you will be slowed down because your consciousness will be diverted and distracted from its normal course of actions.

[4] RATIONALITY & PROBLEM SPACE PRINCIPLES

These relate the user's behaviour in clicking or moving the mouse (say) to the required goal. A simplified measure is usually made by merely counting the number of actions required to achieve a given task. It is a fact that people have problems remembering long sequences of actions when they have no clear idea of what the actions achieve.

Figure 4.

Providing a clear and unambiguous set of actions which can quickly achieve the required task can be quite difficult for the software programmer, who tends to see the problem at a much lower level than the level which is best for the user. Take the case of a simple sliding block puzzle where you need to rearrange the numbers 1 to 15 into the correct order in a 4 by 4 square (Figure 4). Ideally, you should select which number to slide into the vacant position by clicking on it with the mouse, but the programmer may be distracted by other considerations. For example, imagine the difference it would make to the program if you had to first select a number to move, then indicate in which direction you wanted to move it, and then say if you wanted any other numbers in that row or column to move with it. As you can see, the simple task of moving the numbers has been made unnecessarily more complex.
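The single-click version of the puzzle is not hard to program. The Python sketch below is one possible way of doing it, not a description of any particular product: the board is assumed to be a list of four rows, with 0 standing for the vacant position, and the function name click_tile is invented for this example. One click on a tile in the same row or column as the gap slides that tile, and any tiles between it and the gap, in the only direction they can go.

```python
def click_tile(board, row, col):
    """Handle one click on the tile at (row, col).

    board is a list of rows, with 0 marking the vacant position.
    The clicked tile and every tile between it and the gap slide one
    place towards the gap. Returns True if anything moved.
    """
    size = len(board)
    gap_row, gap_col = next(
        (r, c) for r in range(size) for c in range(size) if board[r][c] == 0
    )
    if (row, col) == (gap_row, gap_col):
        return False  # clicked on the gap itself
    if row == gap_row:
        step = 1 if col > gap_col else -1
        for c in range(gap_col, col, step):
            board[row][c] = board[row][c + step]
    elif col == gap_col:
        step = 1 if row > gap_row else -1
        for r in range(gap_row, row, step):
            board[r][col] = board[r + step][col]
    else:
        return False  # tile is not in line with the gap, so it cannot move
    board[row][col] = 0  # the clicked position becomes the new gap
    return True


board = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 0],
]
click_tile(board, 3, 1)  # one click slides both 14 and 15 to the right
print(board[3])
```

The user never has to state a direction or say whether neighbouring tiles should move too, because the program can work both out from the position of the gap.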

So, a user interface should aim to reduce the number of separate tasks needed, and to make each action as fast as possible. This can be greatly improved if the program anticipates your needs. For example, if you have just recorded a sequence, wouldn't it be fair to assume that you probably want to play it back to hear how it sounds? This brings us to the predictability of the user's actions...

[5] THE UNCERTAINTY PRINCIPLE (CHOICES)

This relates the time taken to make a decision to the number of available choices:

T = I * LOG2 (N + 1)

where N is the number of equally probable choices, and I is a constant of about 140 milliseconds per bit. The equation becomes more complex when the choices are not equally probable, but I have ignored this factor here. What it means is that we should either reduce the number of possibilities at each decision point, or we should try and influence the user's choice by giving him appropriate feedback about which decision he should make.
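As a rough illustration, the equal-probability form of the equation can be evaluated in Python. The function name and the menu sizes are assumptions made for this sketch; the 140 milliseconds per bit figure is the one quoted above.

```python
import math

# Constant quoted in the article: about 140 milliseconds per bit.
I_MS_PER_BIT = 140.0


def decision_time_ms(choices, i_ms_per_bit=I_MS_PER_BIT):
    """Estimate decision time using T = I * log2(N + 1) for N equally likely choices."""
    if choices < 1:
        raise ValueError("there must be at least one choice")
    return i_ms_per_bit * math.log2(choices + 1)


# Hypothetical dialogue boxes offering 1, 3, 7 and 15 equally likely options.
for n in (1, 3, 7, 15):
    print(n, round(decision_time_ms(n)))
```

Because the relationship is logarithmic, each doubling of the number of options adds a roughly fixed amount of thinking time instead of doubling it, but the cost is still paid at every decision point.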

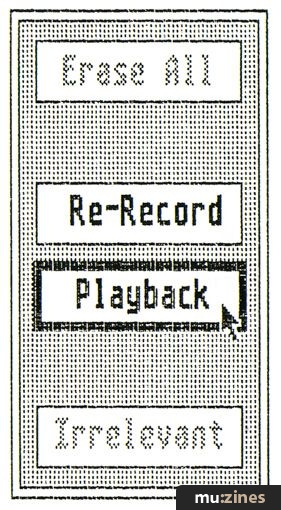

Figure 5.

In the example above, once we have recorded our sequence, wouldn't it be nice to then be presented with a button marked 'Play' at the mouse position, with 'Re-Record' and a few other options a short distance away? Equally, if a valid choice in a box is highlighted when the mouse passes over it, as in menu bars, then the attention of the user is focused on that choice; whilst dimmed or 'greyed' options can be ignored and don't distract the attention of the user. Taking Fitts's Law into consideration as well, any related choices should be positioned close together, and any 'dangerous' options, like erasing a sequence, should be positioned further away. It is the totality of all the measures described here which is important, not each in isolation.

[6] MODES

Basically, a mode occurs whenever you cannot access all of the capabilities of a program without taking an intermediate step. Such a design gets in the way of the user and should obviously be avoided, but modes do often crop up. In some sequencer programs, you need to move to and fro between different screen pages to accomplish some quite ordinary manipulations, when really a single page would suffice. Similarly, some menu-based programs do not allow you to move freely from any page to any other page, and this turns the user's task into some sort of puzzle where he is trying to find the right page - very distracting.

Figure 6.

A common flaw in programs involves an action that needs to be accessible at any time, but which is a self-contained unit. A typical example often occurs in Voice Editing software, where it is desirable to be able to try out the effect of an edit by playing a few notes. One approach would be to use the mouse to play notes - by pressing the right-hand button and moving the mouse, for example. Another approach might be to have a menu option which plays a few notes, or even a button which makes a dialogue box appear containing a mini sequencer.

Of these options, the first enables you to gauge the effects on the sound without breaking away from the task in hand, whilst the second requires the movement of the mouse to the top of the screen and then the selection of a menu option. The final approach requires moving the mouse to the button to select the sequencer dialogue box, moving the mouse again to replay the sequence, and then clicking on the 'OK' box to return to the editing task. Which of these three sets of actions would you like to repeat one hundred times?
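Applying the simple 'count the actions' measure to these three approaches makes the difference plain. In the Python sketch below, the way each approach is split into steps is one reading of the descriptions above, and the names are invented for the example, so treat the totals as indicative only.

```python
# Steps per audition, as read from the three approaches described above.
# The exact split into steps is an assumption made for this illustration.
approaches = {
    "mouse plays notes": [
        "press right-hand button and move mouse",
    ],
    "menu option": [
        "move mouse to top of screen",
        "select menu option",
    ],
    "dialogue box": [
        "move mouse to button and open sequencer dialogue box",
        "move mouse again and replay sequence",
        "click 'OK' to return to editing",
    ],
}

REPETITIONS = 100  # the 'one hundred times' of the question above

totals = {name: len(steps) * REPETITIONS for name, steps in approaches.items()}
for name, total in totals.items():
    print(f"{name}: {total} actions")
```

The counts ignore how long each step takes, so the real gap is wider still: the second and third approaches also involve long mouse movements away from the editing area and back.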

CONCLUSIONS

To summarise, Fitts's Law measures how well related buttons are kept close together and in a consistent position; Rationality shows how well the program divides tasks into understandable and usable chunks; Choices measures the efficiency of the program at reducing your decisions and thus pointing you in the likely direction; and Modes tells you how infuriating the program is likely to be in the long run, when you discover that you have to do lots of repetitive work to achieve a simple task.

Remember that these figures will become increasingly important for serious long-term use of the software - a minor niggle at first can quickly become a major nuisance. It is very easy to be initially impressed by the intuitive nature of a WIMP environment, only to find it becoming unsatisfactory as you begin to use it in depth. If possible, when choosing a program, try and use the program to perform as real a task as you can - and most especially, use the program yourself; do not rely on the ability of a skilled demonstrator. [Note: Many of the SOS Shareware demo disks will allow you to investigate program features in your own good time at home - Ed.] Try to assess the program in terms of the major features described above, and look at screen layouts critically - you may never be able to look at a screen again without wondering why the 'OK' box is hidden away in the top left-hand corner...

Further Information:

The information contained in this article about user interfaces is based upon 'The Psychology Of Human-Computer Interaction' by Card, Moran and Newell (Lawrence Erlbaum Associates, Hillsdale, New Jersey, 1983). This is the fundamental and indispensable work on this topic - all programmers should have a copy!

Also of interest may be 'Direct Manipulation: A Step Beyond Programming Languages' by Ben Shneiderman (IEEE Computer, August 1983, pp. 57-69). I would also like to thank Tim Oren for his invaluable work 'Professional GEM' (public domain - see any US Atari BBS) which greatly increased my interest in this subject.

Publisher: Sound On Sound - SOS Publications Ltd.

The contents of this magazine are re-published here with the kind permission of SOS Publications Ltd.

The current copyright owner/s of this content may differ from the originally published copyright notice.

Feedback by Martin Russ
