A Basic Guide To Acoustic Sound Synthesis

Article from Sound On Sound, June 1988

Rudi Cazeaux explores the nature of sound and presents some basic guidelines for synthesizing the various standard groups of acoustic instruments.

Synthesizers have come a long way since the heady days of the Sixties, when flares and platform boots were de rigueur. Now in the lean, mean Eighties, the digital revolution has arrived. The good old analogue synthesizer's user-friendly knobs have been replaced by the incremental slider and long lists of parameters. A plethora of synthesizing methods now exists and it is hard keeping up with all the latest developments. You might be forgiven for thinking that to make worthwhile music today you'd need access to a whole bunch of state-of-the-art equipment: mega-samplers, digital reverbs, computers, drum machines and other hi-tech paraphernalia. It is all too easy to forgo inventiveness and let imagination take a back seat. A slick production and the latest equipment will go some way (in some cases a long way) towards an original sound, but there are other effective ways. Let us examine the problems facing the budding synthesist.

THE PROBLEM

When a new machine is released on the market, its sonic potential is judged mainly on the strength of its presets. However, there is often much more to an instrument than the sounds it leaves the factory with. Neither the reviewer nor the customer in the shop has sufficient time to explore all of the instrument's possibilities. The original programmers try to show off the sound potential of a new instrument by incorporating as wide a range of sounds as they can muster but are limited by tight deadlines. It is left to you, the user, to get the most out of your equipment; unless you wish to rely on third-party programmers. Although it isn't possible to cover every single aspect of sound creation in this article, it should give you sufficient insight into the principles involved in sound synthesis and help you in creating your own sounds.

Sound synthesis is one of the most exciting developments in the history of music and sound making. The fact that we are now able to manipulate virtually every aspect of a sound should, in theory, lead to countless new prospects in music making. We are, however, limited by a number of factors.

First of all, hardware/software limitations... Contrary to some manufacturers' claims, there are limits to the sonic potential of their instruments. These are due to such factors as oscillator design, envelope response, harmonic spectra, etc. That is why your Juno 1 is never going to sound like a DX7, no matter how hard you try. Even a high quality sampler alters the quality of a sound it records, and different samplers each have their own characteristics. Whilst you might be able to produce a great variety of sounds on your equipment, you will find that they all share a certain 'quality' about them. This is a good thing in that it can give a particular piece of equipment its own distinct sonic personality... which is just as well for instrument manufacturers.

Another important element is ergonomics - the way in which the interface between the operator (you) and the instrument works. It is all too easy when confronted with some of the newer generation of digital synthesizers to be baffled by the array of parameters available. In the old days of the one knob per function analogue synth, it was somehow easier to relate to what happened to the sound when 'twiddling' controls. For economic reasons that age has now gone and we must make the best of the incremental slider/rotary wheel. A real help here is the large number of computer-based editing packages in which all the parameters are there, on the screen, in all their splendour for you to see and manipulate. Whilst this is of benefit, a basic understanding of the principles behind sound creation is still needed to help you in making the most of your investment.

The second factor is acceptance... Sounds go in and (mainly) out of fashion. Witness the 'syndrum': once a legend in its own lifetime, now relegated to the same place as the 14-inch flares and the sitar solo! As new instruments come onto the market it seems that they have to carry with them a collection of 'essential' factory presets in addition to genuinely new sounds. You have to have certain sounds. Vibes, marimbas, kotos and other such exotic instruments, once the province of ethnic musicians, are now part and parcel of virtually any modern musician's arsenal. There is also the human tendency to play it safe and go for the 'tried and tested'. Music-making is no different in this respect. Witness the proliferation of gated snares, FM bass sounds and stu-stu-stuttering vocals. In addition, every musical genre carries its share of audio clichés - the Rhodes electric piano impersonation for soul ballads, the Fairlight flute-cum-vocal sound for TV adverts. The list is endless.

The third reason, and an important one, is based on the physics of sound itself and our brain's perception of sound. Take reverb, for instance. It is part of our everyday life. We might not be aware of it but it surrounds us and affects every single sound we hear. Deprive a sound of reverberation (a situation not normally present in nature and achievable only in an anechoic chamber) and your brain will tell you that 'something is wrong'. Without that vital clue, the ear will perceive that sound as unnatural. Another factor is the initial attack of a sound. It has been shown in experiments that the brain gathers most of its clues about the nature of a sound in the first 500 milliseconds or so of its attack phase, and that if you artificially remove the attack transients of an instrument it becomes very difficult to identify the instrument in question. Therefore, if we are to attempt to synthesize acoustic sounds or create tones which sound 'natural' to the ear, we have to bear in mind the principles of sound creation.

THE SOLUTION

How is sound created? What makes one sound different from another? Any sound we hear is the result of vibrations, that is, rapid changes in air pressure. These vibrations are produced by various means and obey the laws of physics. Unless sustained, they will tend to dissipate. A small appreciation of the principles involved here will go a long way to understanding what makes various instruments sound different to our ear. Thus, we'll need to look at the three specific areas which form the basis of sound synthesis: pitch, amplitude and timbre.

PITCH

As mentioned earlier, all sound is transmitted through vibrations. If the vibrations are random they appear as 'noise' (ie. undefined pitch) to our ears. If the vibrations repeat regularly we perceive them as 'pitch'. What pitch is heard depends on how fast the cycle repeats. This rate is measured in cycles per second, or Hertz (Hz). Typically, the human ear responds to a range from about 15 cycles per second (ie. extremely low) to between 15 and 20 thousand cycles per second (ie. ear-piercingly high). Any lower frequencies are only felt as a pressure wave; any higher ones would probably give us a headache or, more likely, not be heard at all. Examples of unpitched sound are thunder and some drums - which is why drummers don't need to change key whilst the rest of us do.

AMPLITUDE

The second element in describing a sound is its 'amplitude' or loudness. Loudness depends on how the vibrations are amplified. In an acoustic guitar the wooden case acts as a mechanical amplifier or resonator. It magnifies the vibrations produced by the guitar strings when they are plucked or strummed. In a solid bodied electric guitar hardly any sound seems to be produced when the guitar is not plugged in. In fact, the strings vibrate much as their acoustic counterparts do, but the solid body of the electric guitar acts to damp rather than amplify the string vibrations.

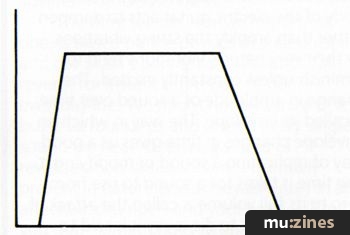

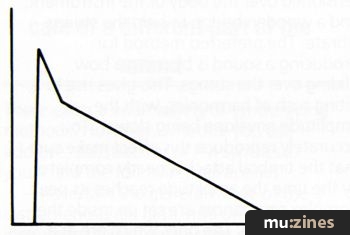

Figure 1. A simple ADSR envelope.

By their very nature, vibrations tend to diminish unless constantly excited. The change in amplitude of a sound over time is called its envelope. The way in which an envelope changes in time gives us a good way of replicating a sound or modifying it. The time it takes for a sound to rise from zero to its full volume is called the attack. If the sound starts to die away it is said to decay. Some instruments, such as the violin, can be made to sound at a more or less continuous level, and this is referred to as the sustain level. Once the sound ceases to be produced it takes a certain time to die away, and this is known as its release time. Of course, some instruments have much more complex amplitude envelopes than this and similarly sophisticated envelope generators are needed to accurately imitate them. Generally, acoustic sounds tend to have long envelopes in the lower registers and shorter ones as the pitch rises.
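The four stages described above can be sketched in a few lines of Python. This is only a minimal illustration, assuming straight-line segments and times in seconds; the function name `adsr_level` and its parameters are made up for this example, and real envelope generators often use curved segments instead.

```python
def adsr_level(t, attack, decay, sustain, release, gate_time):
    """Return the envelope level (0.0 to 1.0) at time t seconds.

    attack, decay and release are times in seconds; sustain is a level
    between 0.0 and 1.0; gate_time is how long the key is held down.
    Straight-line segments are an assumption made for simplicity.
    """
    def held_level(x):
        # Level while the key is down: rise, fall to sustain, then hold.
        if x < attack:
            return x / attack
        if x < attack + decay:
            return 1.0 - (1.0 - sustain) * (x - attack) / decay
        return sustain

    if t < 0.0:
        return 0.0
    if t < gate_time:
        return held_level(t)
    # Key released: fade in a straight line from the level reached so far.
    if release <= 0.0 or t >= gate_time + release:
        return 0.0
    return held_level(gate_time) * (1.0 - (t - gate_time) / release)


# A violin-like shape: gentle attack, high sustain, moderate release.
for moment in (0.0, 0.05, 0.1, 0.5, 1.15, 2.0):
    print(moment, adsr_level(moment, 0.1, 0.2, 0.5, 0.3, gate_time=1.0))
```

Setting the sustain level to zero turns the same function into the simple attack-decay shape used for percussive sounds.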

TIMBRE

Finally, our last area of interest is harmonic content. 'Harmonics' are vibrations which are additional to the one we perceive as the main pitch of a sound (known as the 'fundamental'). They tend to oscillate at speeds which are usually a whole-number multiple of the fundamental. So, if our main frequency was 440Hz (concert pitch), the first few harmonics would be at 880Hz, 1320Hz, 1760Hz and so on. The reason we don't hear them as different pitches is that their amplitude usually reduces the higher they go. Why are they so important if we can't hear them? Well, they contribute to the character of a sound. For example, a guitar has a very strong second harmonic which under some circumstances (such as feedback) can become stronger than the fundamental. The seventh harmonic in a piano is part of what we perceive as the 'piano sound'. Of course, there is more to it than simply the frequency of a single harmonic. The mix of harmonics, along with their respective amplitudes and frequencies, and the way they change in time is known as the timbre of a sound. The way in which harmonics evolve and change in time is known as the harmonic envelope and is often similar to its amplitude counterpart. If the overtones are not whole-number multiples of the fundamental frequency of a sound they are called 'inharmonic' (sometimes loosely called 'enharmonics'). Our ears perceive inharmonic overtones as clangorous or out of tune. A bell is a good example of an inharmonic sound.
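Taking harmonics as whole-number multiples of the fundamental, the series is easy to work out, and summing sine waves at those frequencies gives a crude additive tone. The sketch below is illustrative only: the function names are invented for this example, and the falling amplitudes (1, 1/2, 1/3, 1/4) are an assumption standing in for 'amplitude reduces the higher they go'.

```python
import math


def harmonic_frequencies(fundamental_hz, count):
    """Return the fundamental followed by its harmonics (whole multiples)."""
    return [fundamental_hz * n for n in range(1, count + 1)]


def additive_sample(t, fundamental_hz, amplitudes):
    """Sum sine-wave harmonics at time t; amplitudes[0] is the fundamental."""
    return sum(
        amp * math.sin(2.0 * math.pi * fundamental_hz * (n + 1) * t)
        for n, amp in enumerate(amplitudes)
    )


print(harmonic_frequencies(440.0, 4))  # [440.0, 880.0, 1320.0, 1760.0]

# Assumed amplitudes: each harmonic quieter than the one below it.
amplitudes = [1.0, 1.0 / 2, 1.0 / 3, 1.0 / 4]
one_cycle = [additive_sample(i / 44100.0, 440.0, amplitudes) for i in range(100)]
```

Changing the list of amplitudes changes the timbre without changing the pitch, which is the whole point of the exercise.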

So, to recapitulate, a sound is made of three components: its pitch, depending on how fast it vibrates; its envelope, depending on how its loudness changes over time; and its timbre or harmonic content. Armed with these three basic building blocks we can now go about synthesizing sounds.

THE METHOD

First we'll need to understand the constraints placed upon us by our instruments. Our second area is methodology, ie. how we go about creating a new sound. Finally, we need to analyse what gives a sound its own character and set about reproducing it.

To start with you'll need to familiarise yourself with the basic design and limitations of your equipment. For example, try the shortest possible settings of the envelope generators on your synth. Gradually increase the attack time only. Go through the same process with the decay and release controls. If your synthesizer includes filters, try to fully open them and then close them. Notice how they alter the timbre of a sound. Try the lowest and highest settings the oscillators are capable of. Try various detune amounts; does the detune sound natural or does it cycle and sound artificial? This could be useful for simulating chorus or flanging, for instance. There are many quirks which might be usable in virtually any instrument.

Next we need to define our method. There are many valid approaches to synthesizing an instrument. You could start and work your way from the ground up, twiddling the controls until something happens. Another method is to take a preset similar to the sound you are trying to emulate and edit it until it fits the bill. A better approach is an amalgamation of the two. What you must try to do is to analyse the way in which a sound is produced - to obtain a 'mental picture' of it - and then go about synthesizing it. Hardware-wise, you'll need oscillators and a way to alter their amplitude and timbre envelopes. There exists a wide variety of synthesizing methods. However, whether you use additive, subtractive or FM synthesis, you'll find the following still applies.

Ascertain the general range of the instrument. Is it a high, mid or low range instrument? Next, try to copy its attack. Is it slow or fast? Apply the same method to the rest of its envelope. Once you think you've got it right, work on the body of the sound. Try to emulate the instrument's timbre. You will find that you probably have to go by trial and error to get a good approximation of the tone you're after. In subtractive synthesis you'll have to use the filter envelope to get the right harmonic movement. If you use FM, then the modulator(s) will act as the timbral envelope. Casio CZ owners should use the DCW generator supplied on their synths. I have kept the example envelope diagrams very simple and stuck to an ADSR (Attack Decay Sustain Release) type envelope. They are intended to give you an idea of the basic envelope shape needed.

Earlier, I mentioned how a simple understanding of the physics of sound would help us in our synthesizing efforts. Now is the time to put this into practice. Let us examine how sounds are produced in the real world. There are, in fact, three generic families of acoustic instruments, grouped for convenience under the headings of 'wind', 'string' and 'percussion'. They are found in one form or another in virtually every culture. As always, there is some cross-fertilisation between these three main groups. For instance, the pianoforte relies on hammers hitting metal strings to produce its sound and could therefore come under either the percussion or string category. The same is true of the bass guitar, which can be played very percussively. And what about the didgeridoo? Is it wind, string or percussion? I'll let you work that one out.

WIND INSTRUMENTS

The wind family encompasses a wide range of instruments such as woodwind, brass, reeds and pipes. Their basic design is very similar. Air in a column is made to vibrate by various means, including reeds, mouthpieces, the angle of the player's mouth or (in the case of a pipe organ) an air compressor. Their pitch is varied by changing the effective column length or its pressure. In the case of a flute, this is produced by stopping holes along its length; in the case of a trumpet, it is by using an arrangement of valves connecting various lengths of tube together to determine the correct pitch. A factor which affects the tone of many wind instruments is 'embouchure'. This is the technique which involves the interaction between the player and his instrument's mouthpiece. Through a player's embouchure a great range of tones and expression can be achieved. This is what makes the accurate synthesis of an instrument such as the saxophone so difficult to achieve.

PIPE ORGAN

Figure 2. Typical Pipe Organ amplitude envelope.

The pipe or church organ is one of the least complex sounds to recreate. It is produced by sets of pipes, usually referred to as 'ranks', of various lengths, diameters and materials, through which air is pumped. Slight differences in tuning, timbre and attack combine to produce its distinctive tone.

To synthesize the rich sound of the church organ, start by creating a flute-like sound, fairly clear and pure with little harmonic content; a quick attack, no decay, full sustain and very little release. Mix in a second oscillator, producing a much richer tone, exactly one octave lower. As lower ranks take longer to 'speak', the attack time should be slightly lengthened to simulate that effect.

Likewise, use a slightly longer release to emulate the reverberation of a large hall, so that the sound lingers on. The harmonic content should remain steady, with no sweep used. Use some slow detuning to add to the sound. The modulation rate should be no faster than one cycle every two seconds (0.5Hz). A little bit of white noise could be used to add some grittiness to the sound, and the higher oscillator could be tuned to a fifth.
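If your synth expresses detune in cents rather than as a rate, a little arithmetic gets you to the 0.5Hz figure. The sketch below assumes the slow movement comes from the two oscillators beating against each other, which is only one way of reading 'slow detuning'; the function names are invented for this example. With the lower oscillator an octave down, the beating happens between its second harmonic and the upper oscillator, so the sum is done at the upper pitch.

```python
import math


def detune_cents_for_beat(pitch_hz, beat_hz):
    """Cents of detune that shift pitch_hz upwards by beat_hz."""
    return 1200.0 * math.log2(1.0 + beat_hz / pitch_hz)


def detuned_frequency(pitch_hz, cents):
    """Frequency after shifting pitch_hz by the given number of cents."""
    return pitch_hz * 2.0 ** (cents / 1200.0)


upper_hz = 440.0            # flute-like upper oscillator
lower_hz = upper_hz / 2.0   # richer oscillator, exactly one octave lower

# Detune the lower oscillator so its second harmonic sits 0.5Hz above
# the upper oscillator, giving one beat every two seconds.
cents = detune_cents_for_beat(upper_hz, 0.5)
lower_detuned_hz = detuned_frequency(lower_hz, cents)
beat_hz = 2.0 * lower_detuned_hz - upper_hz
print(round(cents, 2), round(beat_hz, 3))
```

At this pitch the answer comes out at about 2 cents. Because the same number of cents gives faster beating higher up the keyboard, a fixed detune setting will sound busier on high notes than on low ones.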

BRASS

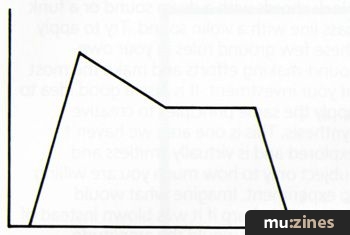

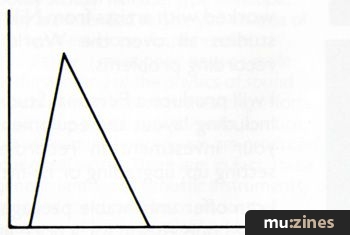

Figure 3. Typical Brass envelope.

Because of their metallic content, brass instruments have rich overtones which are brought out at higher volume. The raw tone of most brass instruments tends to be very bright and slightly buzzy. During their attack phase a characteristic 'blurt' is produced when the player overblows. To imitate this use a slowish attack with some decay and a long sustain. To reproduce the brass tone, give the harmonic envelope a slightly slower attack time to simulate the harmonic rush. It is crucial here to get the attack phase exactly right. Experiment with the rise times of the amplitude (VCA/Carrier) and timbral (VCF/Modulator) envelopes. A slight pitch waver can be introduced to make the simulation more human. Different attacks and release times will give you varying tones from funky horn sections to Baroque style trumpets or rich Vangelis-like brass tones. The lower range brass instruments tend to have slower attacks and be more muted in tone.
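The relationship between the two rise times can be sketched as follows. Everything numeric here is an assumption chosen for illustration (60ms amplitude rise, 120ms timbral rise, a filter cutoff sweeping from 400Hz to 6kHz), and the function names are hypothetical; the only point being shown is that the brightness arrives slightly after the loudness.

```python
def ramp(t, rise_time):
    """Straight-line rise from 0.0 to 1.0 over rise_time seconds."""
    if rise_time <= 0.0:
        return 1.0
    return min(max(t / rise_time, 0.0), 1.0)


def brass_state(t, amp_attack=0.06, timbre_attack=0.12,
                min_cutoff_hz=400.0, max_cutoff_hz=6000.0):
    """Return (loudness, filter cutoff in Hz) at time t after key-down.

    The timbral envelope (VCF or modulator level) is given a slower
    rise than the amplitude envelope (VCA or carrier level).
    """
    loudness = ramp(t, amp_attack)
    brightness = ramp(t, timbre_attack)
    cutoff_hz = min_cutoff_hz + (max_cutoff_hz - min_cutoff_hz) * brightness
    return loudness, cutoff_hz


for moment in (0.0, 0.03, 0.06, 0.12):
    print(moment, brass_state(moment))
```

With these assumed figures the note is at full volume after 60ms while the filter is only half open, so the harmonics are still rushing in as the note settles. Shortening or lengthening both times together is one way to move between punchy horn stabs and softer, slower brass.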

STRINGED INSTRUMENTS

The basic design here is a string or strings made of various materials, from animal gut to metal wire, attached to a resonator (a sound box), most often made of wood. A string is made to vibrate in various ways, using hand, nail, quill or plectrum to pluck it, fingers to strum it, a bow to slide over it or some form of hammer to strike it. These four methods will produce a variety of sounds ranging from a harp to a violin. Once again, different techniques are available to the player. A violinist might pluck a string instead of bowing it to obtain a pizzicato tone. By lightly touching the strings of his guitar at certain specific locations along the fretboard, the guitarist can produce clear, bell-like tones known as harmonics. Some people have also been known to 'prepare' a grand piano by sticking knives, forks and various other objects into the string mechanism in the search for new tones.

GUITAR

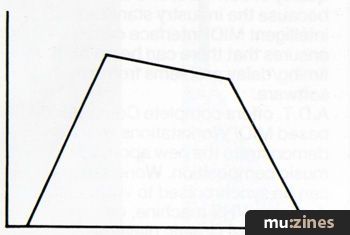

Figure 4. A plucked Guitar envelope.

The acoustic guitar is a plucked instrument. The basic tone will vary according to whether it is strung with nylon or steel strings. The amplitude envelope of a guitar has a fast attack characterised by the pick noise produced by a plectrum or the player's fingers, followed by a 'twangy' (don't you just love these technical words!) sound as the harmonics settle. The second harmonic is quite strong. The decay's length will depend on how strongly the string was plucked and the pitch at which it is played. Higher notes have a shorter decay, so use of your keyboard scaling facility is important. To add realism to the sound you could try adding squeaks and buzzes to imitate fingers sliding on the fretboard. Guitar techniques are very idiosyncratic and range from strumming to pitch-bending to using 'bottle neck' techniques. Try to simulate both a nylon and steel-strung guitar.
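Keyboard scaling of the decay time can be pictured as a simple rule that shortens the decay as you play higher. The sketch below assumes MIDI-style note numbers and a decay that halves for every octave above a reference note; both are illustrative choices rather than measurements of a real guitar, and `scaled_decay` is a made-up name.

```python
def scaled_decay(base_decay_s, note, reference_note=60, halving_interval=12):
    """Decay time in seconds for a given note number.

    The decay equals base_decay_s at reference_note and halves for every
    halving_interval semitones above it (and doubles below it).
    """
    octaves_above = (note - reference_note) / halving_interval
    return base_decay_s * 2.0 ** (-octaves_above)


# Assumed base decay of 2 seconds at note 60 (middle C).
for note in (48, 60, 72, 84):
    print(note, scaled_decay(2.0, note))
```

On a real instrument you would set this with whatever key-follow or rate-scaling amount your envelope offers, and trim it by ear.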

STRINGS

Figure 5. Strings envelope.

The string family comprises a large number of related instruments varying in size but all using the same basic design of a wooden case acting as resonator, strings tensioned over the body of the instrument, and a wooden bridge to carry the strings' vibrations to the body. The preferred method for producing a sound is by using a bow gliding over the strings. This gives rise to a biting rush of harmonics, with the amplitude envelope being slower. To accurately reproduce this effect make sure that the timbral attack is nearly complete by the time the amplitude reaches its peak. Complex resonances are set up inside the body of a violin. The ones which are at a fixed frequency (affected by the size of the instrument, its finish and shape amongst other things) are known as 'formants'. The effect is similar to using a parametric equaliser to boost certain fixed frequencies. Formants are also present in vocal sounds. Vibrato (pitch modulation) is a crucial element of the string sound. It is applied by the player rocking his or her finger on the string to create a regular pitch modulation. Use a delayed LFO to reproduce this effect. There is a marked difference in tonality between when a string instrument is played on its own and when it is played as part of an ensemble. The solo violin has a very bright and biting (almost strident) tone, whereas the ensemble violin has a much more subdued and mellow sound. To emulate ensemble playing, use very little timbral change, a slow attack and slightly longer release. Detune and vibrato should be added, and to accurately reproduce the phasing effect of many players playing together use a chorus unit.
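A delayed LFO simply holds off the vibrato for a moment after the note starts and then fades it in. Here is a minimal sketch; the delay, fade-in time, rate and depth are all assumed values to be adjusted by ear, and the function names are invented for this example.

```python
import math


def delayed_vibrato_cents(t, delay=0.3, fade_in=0.4,
                          rate_hz=5.5, depth_cents=20.0):
    """Pitch offset in cents at time t seconds after the note starts."""
    if t <= delay:
        return 0.0  # no vibrato yet: the note speaks cleanly first
    elapsed = t - delay
    fade = 1.0 if fade_in <= 0.0 else min(elapsed / fade_in, 1.0)
    return depth_cents * fade * math.sin(2.0 * math.pi * rate_hz * elapsed)


def vibrato_frequency(base_hz, cents):
    """Oscillator frequency after applying a pitch offset in cents."""
    return base_hz * 2.0 ** (cents / 1200.0)


for moment in (0.1, 0.4, 0.8, 1.2):
    offset = delayed_vibrato_cents(moment)
    print(moment, round(vibrato_frequency(440.0, offset), 2))
```

Short notes never reach the vibrato at all, which is roughly how a string player treats fast passages.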

PERCUSSION

Our final group is that of the percussion family. These are generally subdivided into pitched and unpitched percussion. Some of the pitched group are sometimes referred to as orchestral percussion, examples being the timpani family (also known as kettle drums) and tubular bells. The percussion group is one of the richest in variety and an essential part of modern music-making.

Consider how a percussive sound is produced. A resonator is hit by means of the hand or some form of stick or mallet. Depending on the shape, size and material of the resonator (it could be anything from a clay pot to metal bars) and the speed and force of the hit, a short burst of overtones is produced. These tend to be inharmonic (not whole-number multiples of the fundamental) and as such sound out of tune. That quick burst of overtones tends to dissipate quickly and we are left with an after-sound which also dies away quickly.

It is essential here that your synthesizing hardware is capable of the fast rise time (attack) needed in order to successfully emulate the percussive effect.

BELLS

Figure 6. Typical amplitude envelope of a Bell.

Bell tones are one of the fascinating areas of sound synthesis. A metal bell is hit by a beater and gives rise to a lot of inharmonic overtones which shift in time and frequency. The amplitude envelope is very simple with a very fast attack and long decay. The harmonic timbre shifts in time and so the timbre envelope should simulate that effect. Bell tones are best reproduced by having one oscillator modulate another or, if you own a wavetable synth, by using a waveform with odd ratio harmonics. The oscillators could be frequency modulated but I have had good results with amplitude or cross-modulation. The key here is the tuning between the two oscillators. This should be set to a weird, non-musical interval for best results. It should be used to give rise to a series of inharmonic overtones known as 'sidebands' to simulate the multitude of bell tones available. Experimentation is the order of the day here.
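To see why the tuning between the two oscillators matters so much, it helps to list where the sidebands fall. In simple two-oscillator FM they appear at the carrier frequency plus and minus whole multiples of the modulator frequency, with any 'negative' frequencies folding back as positive ones. The sketch below only lists the frequencies, not their strengths; the function name and the example tunings are assumptions.

```python
def fm_sidebands(carrier_hz, modulator_hz, orders=3):
    """Carrier plus sideband frequencies for simple two-oscillator FM."""
    freqs = {carrier_hz}
    for k in range(1, orders + 1):
        freqs.add(carrier_hz + k * modulator_hz)
        # A sideband below zero folds back to the same positive frequency.
        freqs.add(abs(carrier_hz - k * modulator_hz))
    return sorted(freqs)


# Musical tuning: modulator an octave above the carrier.
print(fm_sidebands(200.0, 400.0))
# [200.0, 600.0, 1000.0, 1400.0]

# 'Weird' tuning: modulator at 1.4 times the carrier.
print(fm_sidebands(200.0, 280.0))
# [80.0, 200.0, 360.0, 480.0, 640.0, 760.0, 1040.0]
```

With the octave tuning every component is a whole multiple of 200Hz, so the result sounds pitched and harmonic. With the 1.4 ratio almost nothing lines up with multiples of the carrier, and that clangorous spread is what the ear reads as a bell.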

XYLOPHONE

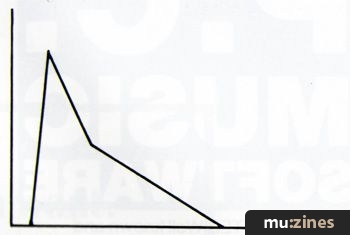

Figure 7. Fast Attack-Decay envelope of a typical Xylophone.

The essential elements of the xylophone sound are its wooden tone and quick attack. The amplitude envelope is very simple and very short. Attack, decay, no sustain. The harmonic envelope is very simple too with the mallet striking the wooden bars producing a short, hollow thud followed by a quick burst of harmonics. The timbre envelope follows the amplitude envelope almost exactly. To simulate more accurately the sound of the mallet, try adding a second oscillator exactly one octave above.
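Put together, the xylophone recipe is short enough to write out in full. This is a rough sketch only: sine waves stand in for whatever waveform your synth offers, and the attack time, decay time and level of the upper oscillator are assumed values.

```python
import math


def xylophone_sample(t, pitch_hz, attack=0.002, decay=0.25, octave_mix=0.3):
    """One sample of a two-oscillator tone with an attack-decay envelope."""
    if t < 0.0 or t >= attack + decay:
        return 0.0  # no sustain: the note is simply over
    if t < attack:
        level = t / attack
    else:
        level = 1.0 - (t - attack) / decay
    body = math.sin(2.0 * math.pi * pitch_hz * t)
    # Second oscillator exactly one octave above, for the mallet 'click'.
    mallet = octave_mix * math.sin(2.0 * math.pi * 2.0 * pitch_hz * t)
    return level * (body + mallet)


sample_rate = 44100
note = [xylophone_sample(i / sample_rate, 880.0) for i in range(sample_rate // 2)]
print(len(note), max(abs(s) for s in note))
```

Because the timbre envelope follows the amplitude envelope almost exactly, a single envelope could drive both the level and the filter (or modulator) on most instruments.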

MICRO SYNTHESIS

The few examples we have discussed so far should provide you with a basic understanding of the rules of synthesis. Of course, when trying to emulate a real instrument, you must also play in a sympathetic way: it is no good playing block chords with a drum sound or a funk bass line with a violin sound. Try to apply these few ground rules in your own sound-making efforts and make the most of your investment. It is also a good idea to apply the same principles to creative synthesis. This is one area we haven't explored and is virtually limitless and subject only to how much you are willing to experiment. Imagine what would happen to a harp if it was blown instead of plucked; what would the amplitude envelope of a 25ft long grand piano be like? Try reversing envelopes, changing timbre - be bold. One of the greatest attractions of synthesizers is that you are not tied to someone else's idea of the ideal sound. It is a sad reflection on musicians' inventiveness that so many people use their synthesizers as preset machines and as a source of instantly available sounds.

One interesting development in synthesis hardware and software is the appearance of what might be termed 'micro synthesis'. Instead of trying to synthesize a whole sound in bulk, you concentrate on separate elements of it. For instance, one oscillator could produce the 'spit' of a trumpet, another the body of the sound, a third the overtones, and a fourth the player's breath, subject, of course, to how much hardware is available. An early example of this technique was the modular synthesizer as used by Wendy (née Walter) Carlos. Instead of being tied to a fixed oscillator-filter-envelope chain, Carlos used different modules to create some very accurate acoustic tones and some startling new ones.

This approach is now reflected in virtually all the new generation of digital synthesizers. Yamaha led the way with their algorithmic FM synthesis. Just have a listen to the now infamous 'E.Piano' preset to hear how each element of the algorithm takes care of a different part of the sound: the body of the piano, the tine bars' tinkle, the hammer thump. With LA synthesis, Roland have stored PCM samples of real instruments to provide the vital attack portion of a sound, along with looped segments and more 'traditional' synth waveforms for the body of the patch. Ensoniq pioneered a similar 'crossfade' method on their ESQ synthesizers. Kawai went all the way with their K5 synth, by providing control over every single harmonic in a sound, but are now introducing a new, less time-consuming method, very similar to the Roland and Ensoniq approach (see Kawai K1 review). No doubt this is a trend which is set to continue, and a very welcome one too.

Of course, there is more to sound synthesis than mere imitation of acoustic sounds, but imitation is the sincerest form of flattery...

Publisher: Sound On Sound - SOS Publications Ltd.

The contents of this magazine are re-published here with the kind permission of SOS Publications Ltd.

The current copyright owner/s of this content may differ from the originally published copyright notice.

Feature by Rudi Cazeaux
